The Radeon HD 5970: Completing AMD's Takeover of the High End GPU Market

by Ryan Smith on November 18, 2009 12:00 AM EST, posted in GPUs

Radeon HD 5970 Eyefinity on 3 x 30" Displays: Playable at 7680 x 1600

TWiT's Colleen Kelly pestered me on Twitter to run tests on a 3 x 30" Eyefinity setup. The problem with such a setup is twofold:

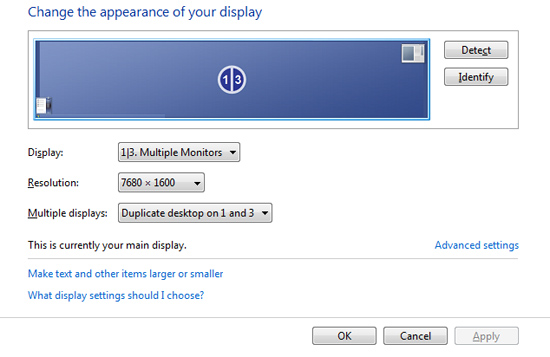

1) I was worried even a 5970 wouldn't be powerful enough to drive all three displays at their full resolution (a total of 7680 x 1600) in a modern game, and

2) The Radeon HD 5970's third video output is mini-DP only.

The second issue is bigger than you might think: there are currently no 30" displays that accept a mini-DP input, only regular DP. And to convert mini-DP to DP or dual-link DVI, you need an active adapter, which is a bit more expensive than a standard converter cable. Apple makes such an adapter and sells it for $99. The local store had one in stock, so I hopped in the batmobile and got one. Update: AMD tells us that in order to use all three outputs, regardless of resolution, you need to use an active adapter for the mini-DP output because the card runs out of timing sources.

Below we have a passive mini-DP to single-link DVI adapter. This is only capable of driving a maximum of 1920 x 1200:

This cable works fine on the Radeon HD 5970, but I couldn't have one of my displays running at a non-native resolution.

Next is the $99 mini-DP to dual-link DVI adapter. This box can drive a panel at the full 2560 x 1600:

User reviews are pretty bad for this adapter, but thankfully I was using it with a 30" Apple Cinema Display. My experience, unlike that of people who attempt to use it with non-Apple monitors, was flawless. In fact, I had more issues with one of my Dell panels than with the Apple. It turns out that one of my other DVI cables wasn't rated for dual-link operation, and I got a bunch of vertical green lines whenever I tried to run the panel at 2560 x 1600. Check your cables if you're setting up such a beast; I had accidentally grabbed a DVI cable meant for a 24" monitor, and that caused the problem.

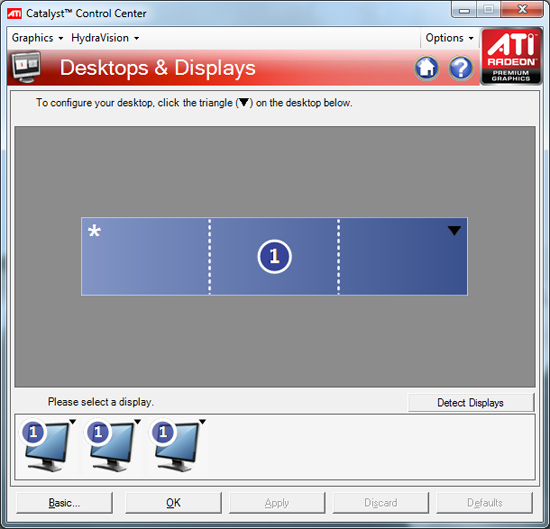

Windows detected all of the monitors. I used the Eyefinity configuration tool to arrange them properly, grabbed a few 7680 x 1600 wallpapers and was at a super-wide Windows desktop.

The usual Eyefinity complaints apply here. My start menu was around 3 feet to the left of my head and the whole setup required nearly 6 feet of desk space.

In-game menus and cutscenes are also mostly borked. They are fixed resolution/aspect ratio and end up getting stretched across all three 30" panels. Case in point is Call of Duty: Modern Warfare 2:

While most games will run at the 7680 x 1600 resolution enumerated by ATI's driver, they don't know how to deal with the 48:10 aspect ratio of the setup (3 x 16:10 displays) or apply the appropriate field of view adjustments. The majority of games will simply stretch the 16:10 content to the wider aspect ratio, resulting in a lot of short and fat characters on screen (or stretched characters in your periphery). Below is what MW2 looks like by default:

All of the characters look like they have legs that start at their knees. Thankfully there's a little tool out there that lets you automatically correct aspect ratio errors in some games. It's called Widescreen Fixer and you can get it here.

Select your game and desired aspect ratio, and just leave it running in the background while you play. Hitting semicolon will enable/disable the aspect ratio correction and result in a totally playable, much less vomit-inducing gaming experience. Below we have MW2 with correct aspect ratio/FOV:

Take note of how much more you can see, as well as how normal the characters now look. Unfortunately Widescreen Fixer only supports 11 games as of today, and four of them are Call of Duty titles:

Battlefield 2

Battlefield 2142

BioShock

Call of Duty 2

Call of Duty 4: Modern Warfare

Call of Duty: World at War

Call of Duty: Modern Warfare 2

Darkest of Days Demo

SEGA Rally Revo

Unreal Tournament

Wolfenstein

There's absolutely no reason for ATI not to have done this on its own. There's a donate link on the Widescreen Fixer website; the right thing for ATI to do would be to pay this developer for his work. He's doing the job ATI's Eyefinity engineers should have done from day one. Kudos to him, shame on ATI.
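To give a sense of the field of view correction discussed above, here is a minimal sketch of the common "Hor+" approach: keep the vertical field of view fixed and widen the horizontal one to match the new aspect ratio. This is an illustration only, not a description of how Widescreen Fixer or ATI's driver actually works. The function name `hor_plus_fov` and the 65 degree starting FOV are assumptions made for the example; only the 16:10 and 48:10 aspect ratios come from the setup described here.

```python
import math


def hor_plus_fov(base_hfov_deg, base_aspect, target_aspect):
    """Return the horizontal FOV (degrees) for target_aspect that keeps the
    vertical FOV identical to a base_hfov_deg view at base_aspect."""
    half_angle = math.radians(base_hfov_deg) / 2.0
    # The tangent of the half angle scales linearly with screen width.
    scaled = math.tan(half_angle) * (target_aspect / base_aspect)
    return math.degrees(2.0 * math.atan(scaled))


single_panel = 16 / 10   # one 30" display
triple_panel = 48 / 10   # three 16:10 displays side by side
base_fov = 65.0          # assumed default horizontal FOV, for illustration

wide_fov = hor_plus_fov(base_fov, single_panel, triple_panel)
print(f"{base_fov:.0f} degrees at 16:10 becomes {wide_fov:.1f} degrees at 48:10")
```

With the assumed 65 degree starting point, the corrected horizontal field of view comes out at roughly 125 degrees, which is why so much more of the scene appears in your periphery once the stretching is removed.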

Performance with 3 x 30" displays really varies depending on the game. I ran through a portion of the MW2 single player campaign and saw an average frame rate of 30.9 fps with a minimum of 10 fps and a maximum of 50 fps. It was mostly playable on the Radeon HD 5970 without AA enabled, but not buttery smooth. Turning on 4X AA made Modern Warfare 2 crash, in case you were wondering. The fact that a single card is even remotely capable of delivering a good experience at 7680 x 1600 is impressive.
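For a rough sense of the workload, the arithmetic behind those numbers can be sketched as follows. The panel resolution, panel count and average frame rate are taken from the text above; the variable names are just for illustration, and raw pixel counts are only a crude proxy for GPU load.

```python
PANEL_WIDTH, PANEL_HEIGHT = 2560, 1600  # one 30" panel
PANEL_COUNT = 3
AVG_FPS = 30.9                          # measured MW2 average from the text

total_width = PANEL_WIDTH * PANEL_COUNT
pixels_per_frame = total_width * PANEL_HEIGHT
single_panel_pixels = PANEL_WIDTH * PANEL_HEIGHT
pixels_per_second = pixels_per_frame * AVG_FPS

print(f"Eyefinity surface: {total_width} x {PANEL_HEIGHT}")
print(f"Pixels per frame: {pixels_per_frame:,}")
print(f"Relative to one panel: {pixels_per_frame / single_panel_pixels:.0f}x")
print(f"Pixels per second at {AVG_FPS} fps: {pixels_per_second / 1e6:.1f} million")
```

That is a bit over 12 million pixels per frame, three times the load of a single 30" panel.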

I also noticed a strange issue where I couldn't get my video to sync upon any soft reboots. I'd need to shut down the entire system and turn it back on for me to see anything on the screen after a restart.

With corrected aspect ratios/FOV, gaming is ridiculous on such a wide setup. You really end up using your peripheral vision for what it was intended. The experience, even in an FPS, is much more immersive. I do stand by my original take on Eyefinity, though: the most engulfing gameplay is when you find yourself running through an open field, not in games that deal with more close quarters action.

Just for fun I decided to hook my entire 3 x 30" Eyefinity setup to a power meter and see how much power three 30" displays and a Core i7 system with a single Radeon HD 5970 would consume.

Under load while playing Modern Warfare 2, the entire system used only 517W. That brings us to the next issue with Eyefinity on the 5970: most games will only use one of the GPUs. Enabling Eyefinity with Crossfire requires a ridiculous amount of data to be sent between the GPUs thanks to the ultra high resolutions supported. Doing this isn't easy given some design tradeoffs made with Cypress (more on this in an upcoming article). Currently, only a limited number of titles support the 5970 running in dual-GPU mode with Eyefinity. Dual-card owners (e.g. 5870 CF) are out of luck: the current drivers do not allow CF and Eyefinity to work together. This will eventually get fixed, but it's going to take some time. With a second GPU running you can expect total system power consumption, including displays, to easily break 600W for the setup I used here.

The difficulties most games have with such a wide setup prevent 3 x 30" Eyefinity (or even any triple-monitor configuration) from being a real recommendation. If you have three monitors, sure, why not, but I don't believe it's anywhere near practical. Not until ATI gets a lot of the software compatibility taken care of.

114 Comments

Lennie - Wednesday, November 18, 2009 - link

If so, then one could suspect it's the same issue with games due to VRMs of this particular card getting heated up and throttling the card. Perhaps not enough contact between VRM and HSF or a complete lack of TIM on VRM by accident. I would have reseated the HSF if I owned that card.

Rajinder Gill - Wednesday, November 18, 2009 - link

I suspect it is VRM/heat related. The 'biggest' slaves Volterra currently supply are rated at 45 amps each afaik. Assuming ATI used the 45 amp slaves (which they must have), you've got around 135 amps on tap. Do the math for OCP or any related throttling effects kicking in. Essentially, 1.10VGPU puts you at 150w per GPU before things either shut down or need to be throttled (depends on how it's implemented as it nears peak). Any which way you look at it, ATI have used a high end VRM solution, but 4 slaves per GPU would have given a bit more leeway on some cards. I wonder what the variance is in terms of leakage from card to card as well. Seeing as there's not much current overhead in the VRM (or at least there does not appear to be), a small change in leakage would be enough to stop some cards from doing too much in terms of overclocking on the stock cooler.

later

Raja

Silverforce11 - Wednesday, November 18, 2009 - link

It could be your PSU, some "single rail" PSU arent in fact using a single rail but several rails with a max limit on AMPs. Its deceptive.

Guru3D uses 1200W PSU and manages 900 core, which is typically what a 5870 OC to on air. Essentially the chips are higher quality cypress, maybe you should retry it again with a different PSU then conclusions can be drawn.

Bolas - Wednesday, November 18, 2009 - link

Yep, there is certainly a market for 5970CF. Can't wait!

tajmahal - Wednesday, November 18, 2009 - link

Big deal, another paper launch where only a tiny handful of people will be able to get one.

LedHed - Tuesday, November 24, 2009 - link

My question is why do they OC the 5970 but not the 295... We all know the 295 is memory bottlenecked at resolutions at/over 2560x1600

But considering the GTX 295 is down below $450 and no one can find these cards in stock with a god awful price of $600 ($100 more than 295 at launch).

mschira - Wednesday, November 18, 2009 - link

newegg list 5 different models, they come and go quite fast.

I managed to get one of them in my shopping card.

All it would need now is pay. (which I don't want to...).

So yea they are not exactly easy to get, but far from impossible.

So not a paper launch.

Be real, it's day two after the launch, and you CAN get them. That's not bad at all.

M.

MrPickins - Wednesday, November 18, 2009 - link

At the moment, Newegg shows two different 5970's in stock. A HIS and a Powercolor.

tajmahal - Wednesday, November 18, 2009 - link

Listed, but not available. I guess newegg sold both of the ones they had available, and the 5850 and 5870 ?......... not available either.

Silverforce11 - Wednesday, November 18, 2009 - link

Plenty of 5870s around at retails and etailers, what do you mean by "another paper launch"?