The Radeon HD 5970: Completing AMD's Takeover of the High End GPU Market

by Ryan Smith on November 18, 2009 12:00 AM EST - Posted in GPUs

Radeon HD 5970 Eyefinity on 3 x 30" Displays: Playable at 7680 x 1600

TWiT's Colleen Kelly pestered me on Twitter to run tests on a 3 x 30" Eyefinity setup. The problem with such a setup is twofold:

1) I was worried even a 5970 wouldn't be powerful enough to drive all three displays at their full resolution (a total of 7680 x 1600) in a modern game, and

2) The Radeon HD 5970's third video output is mini-DP only.

The second issue is bigger than you think: there are currently no 30" displays that accept a mini-DP input, only regular DP. To convert mini-DP to DP/dual-link DVI, you need an active adapter, which is a bit more expensive than a standard converter cable. Apple makes such a cable and sells it for $99. The local store had one in stock, so I hopped in the batmobile and got one. Update: AMD tells us that in order to use all three outputs, regardless of resolution, you need to use an active adapter for the mini-DP output because the card runs out of timing sources.

Below we have a passive mini-DP to single-link DVI adapter. This is only capable of driving a maximum of 1920 x 1200:

This cable works fine on the Radeon HD 5970, but I couldn't have one of my displays running at a non-native resolution.

Next is the $99 mini DP to dual-link DVI adapter. This box can drive a panel at full 2560 x 1600:

User reviews are pretty bad for this adapter, but thankfully I was using it with a 30" Apple Cinema Display. Unlike those who attempt to use it with non-Apple monitors, I had a flawless experience. In fact, I had more issues with one of my Dell panels than with the Apple. It turns out that one of my other DVI cables wasn't rated for dual-link operation, and I got a bunch of vertical green lines whenever I tried to run the panel at 2560 x 1600. Check your cables if you're setting up such a beast: I had accidentally grabbed a DVI cable meant for one of my 24" monitors, and that caused the problem.
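Whether a mode needs single-link or dual-link DVI comes down to pixel clock: single-link DVI tops out at a 165 MHz pixel clock, and dual-link doubles the available bandwidth. The sketch below estimates which link a mode needs. It assumes a flat ~12% blanking overhead on top of the active pixels, which only approximates reduced-blanking timings (a real panel's EDID gives the exact numbers), and the function names are purely illustrative.

```python
# Rough check of whether a display mode fits in single-link or dual-link DVI.
# Assumption: blanking adds ~12% on top of the active pixels, roughly what
# reduced-blanking timings cost; real timings come from the monitor's EDID.
SINGLE_LINK_MHZ = 165.0            # single-link DVI pixel-clock ceiling
DUAL_LINK_MHZ = 2 * SINGLE_LINK_MHZ  # dual-link doubles the bandwidth


def pixel_clock_mhz(width, height, refresh_hz=60, blanking_overhead=0.12):
    """Approximate pixel clock in MHz for a mode, including blanking."""
    return width * height * refresh_hz * (1 + blanking_overhead) / 1e6


def dvi_link_needed(width, height, refresh_hz=60):
    """Classify a mode by the DVI link type its estimated pixel clock needs."""
    clock = pixel_clock_mhz(width, height, refresh_hz)
    if clock <= SINGLE_LINK_MHZ:
        return "single-link"
    if clock <= DUAL_LINK_MHZ:
        return "dual-link"
    return "exceeds DVI"


for w, h in [(1920, 1200), (2560, 1600)]:
    print(f"{w} x {h}: {pixel_clock_mhz(w, h):.0f} MHz -> {dvi_link_needed(w, h)}")
```

Under these assumptions 1920 x 1200 at 60 Hz lands around 155 MHz (single-link is enough), while 2560 x 1600 needs roughly 275 MHz, which is why a cable that isn't wired for dual-link falls over at the 30" panel's native resolution.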

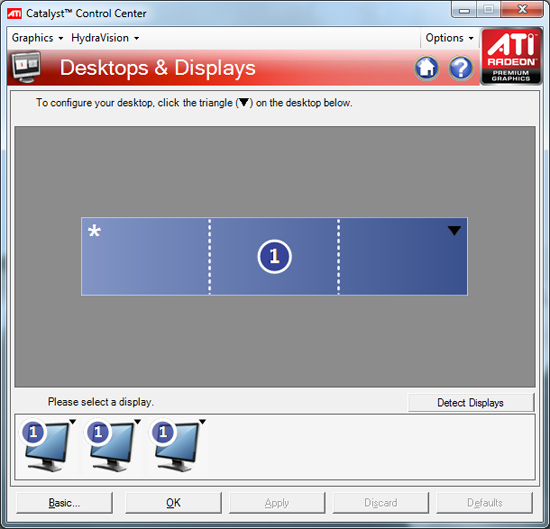

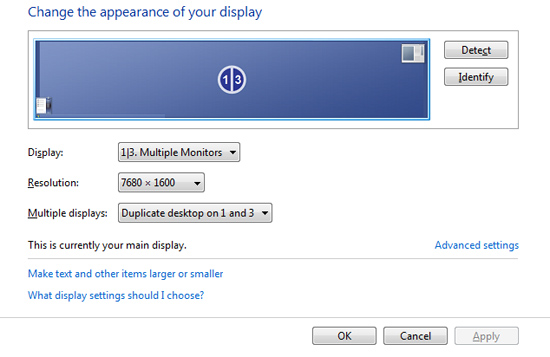

Windows detected all of the monitors. I used the Eyefinity configuration tool to arrange them properly, grabbed a few 7680 x 1600 wallpapers and was soon looking at a super-wide Windows desktop.

The usual Eyefinity complaints apply here. My Start menu was around 3 feet to the left of my head, and the whole setup required nearly 6 feet of desk space.

In-game menus and cutscenes are also mostly borked. They are rendered at a fixed resolution/aspect ratio and end up stretched across all three 30" panels. Case in point is Call of Duty: Modern Warfare 2:

While most games will run at the 7680 x 1600 resolution enumerated by ATI's driver, they don't know how to deal with the 48:10 aspect ratio of the setup (3 x 16:10 displays) and apply the appropriate field of view (FOV) adjustments. Most games will simply stretch the 16:10 content to the wider aspect ratio, resulting in a lot of short, fat characters on screen (or stretched characters in your periphery). Below is what MW2 looks like by default:

All of the characters look like they have legs that start at their knees. Thankfully there's a little tool out there that lets you automatically correct aspect ratio errors in some games. It's called Widescreen Fixer and you can get it here.
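One common way to correct for a wider aspect ratio, usually called Hor+, keeps the vertical field of view fixed and widens the horizontal FOV to match. The sketch below assumes a simple pinhole projection and a hypothetical 90-degree base horizontal FOV at 16:10; it illustrates the geometry only and is not a description of how Widescreen Fixer or any particular game implements its correction.

```python
import math


def hor_plus_fov(base_hfov_deg, base_aspect, target_aspect):
    """Horizontal FOV for a wider screen, keeping the vertical FOV unchanged.

    On a projection plane at unit distance, the visible half-width is
    tan(hfov / 2). Holding the vertical FOV fixed means that half-width
    scales linearly with the aspect ratio.
    """
    half_width = math.tan(math.radians(base_hfov_deg) / 2)
    scaled = half_width * target_aspect / base_aspect
    return math.degrees(2 * math.atan(scaled))


def vertical_fov(hfov_deg, aspect):
    """Vertical FOV implied by a horizontal FOV at a given aspect ratio."""
    half_height = math.tan(math.radians(hfov_deg) / 2) / aspect
    return math.degrees(2 * math.atan(half_height))


SINGLE = 16 / 10      # one 2560 x 1600 panel
TRIPLE = 3 * 16 / 10  # three panels side by side: 48:10

wide = hor_plus_fov(90.0, SINGLE, TRIPLE)
print(f"Hor+ FOV across 48:10: {wide:.1f} degrees")  # 143.1
# The vertical FOV is the same before and after the correction.
print(f"Vertical FOV: {vertical_fov(90.0, SINGLE):.1f} vs {vertical_fov(wide, TRIPLE):.1f}")
```

Because only the horizontal extent grows, characters keep their proportions and the extra width shows up as additional scenery in your periphery, rather than as stretched pixels.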

Select your game and desired aspect ratio, then just leave it running in the background while you play. Hitting the semicolon key enables/disables the aspect ratio correction, resulting in a totally playable, much less vomit-inducing gaming experience. Below we have MW2 with the correct aspect ratio/FOV:

Take note of how much more you can see, as well as how normal the characters now look. Unfortunately, Widescreen Fixer only supports 11 games as of today, and four of them are Call of Duty titles:

Battlefield 2

Battlefield 2142

BioShock

Call of Duty 2

Call of Duty 4: Modern Warfare

Call of Duty: World at War

Call of Duty: Modern Warfare 2

Darkest of Days Demo

SEGA Rally Revo

Unreal Tournament

Wolfenstein

There's absolutely no reason for ATI not to have done this on its own. There's a donate link on the Widescreen Fixer website; the right thing for ATI to do would be to pay this developer for his work. He's doing the job ATI's Eyefinity engineers should have done from day one. Kudos to him, shame on ATI.

Performance with 3 x 30" displays really varies depending on the game. I ran through a portion of the MW2 single player campaign and saw an average frame rate of 30.9 fps, with a minimum of 10 fps and a maximum of 50 fps. It was mostly playable on the Radeon HD 5970 without AA enabled, but not buttery smooth. In case you were wondering, turning on 4X AA made Modern Warfare 2 crash. The fact that a single card is even remotely capable of delivering a good experience at 7680 x 1600 is impressive.
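Converting those frame rates into per-frame times helps explain why a 30.9 fps average can still feel rough: the 10 fps minimum corresponds to stretches where each frame took around 100 ms on average. A trivial conversion sketch using the numbers measured above:

```python
def frame_time_ms(fps):
    """Average time spent on each frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


# MW2 numbers from the run above: average, minimum and maximum fps.
for label, fps in [("average", 30.9), ("minimum", 10.0), ("maximum", 50.0)]:
    print(f"{label}: {fps} fps = {frame_time_ms(fps):.1f} ms per frame")
```

For comparison, a steady 60 fps works out to about 16.7 ms per frame.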

I also noticed a strange issue where I couldn't get my video to sync after a soft reboot. I had to shut down the entire system and turn it back on before I could see anything on the screen after a restart.

With corrected aspect ratios/FOV, gaming on such a wide setup is ridiculous. You really end up using your peripheral vision for what it was intended. The experience, even in an FPS, is much more immersive. That said, I stand by my original take on Eyefinity: the most engulfing gameplay comes when you find yourself running through an open field, not in games that deal with more close-quarters action.

Just for fun, I decided to hook my entire 3 x 30" Eyefinity setup up to a power meter to see how much power three 30" displays and a Core i7 system with a single Radeon HD 5970 would consume.

Under load while playing Modern Warfare 2, the entire system used only 517W, which brings us to the next issue with Eyefinity on the 5970: most games will only use one of the GPUs. Enabling Eyefinity with CrossFire requires a ridiculous amount of data to be sent between the GPUs thanks to the ultra-high resolutions supported. Doing this isn't that easy given some design tradeoffs made with Cypress (more on this in an upcoming article). Currently, only a limited number of titles support the 5970 running in dual-GPU mode with Eyefinity. Dual-card owners (e.g. 5870 CF) are out of luck: the current drivers do not allow CF and Eyefinity to work together. This will eventually get fixed, but it's going to take some time. With a second GPU running, you can expect total system power consumption, including displays, to easily break 600W for the setup I used here.
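To get a feel for why multi-GPU Eyefinity moves so much data, here is a back-of-the-envelope sketch. It assumes 32-bit color (4 bytes per pixel), alternate-frame rendering (AFR) where the GPUs take turns rendering whole frames, and that every frame rendered by the GPU not driving the displays is copied over exactly once. These are simplifying assumptions for illustration, not a description of how Cypress or ATI's driver actually moves data.

```python
def frame_bytes(width, height, bytes_per_pixel=4):
    """Size of one finished frame in bytes, assuming 32-bit color by default."""
    return width * height * bytes_per_pixel


def afr_copy_gb_per_s(width, height, fps, num_gpus=2, bytes_per_pixel=4):
    """Estimated GB/s copied to the display GPU under alternate-frame rendering.

    Assumes (num_gpus - 1) out of every num_gpus frames are rendered on a GPU
    that is not driving the displays, and each of those is copied across once.
    """
    copied_fps = fps * (num_gpus - 1) / num_gpus
    return frame_bytes(width, height, bytes_per_pixel) * copied_fps / 1e9


eyefinity = afr_copy_gb_per_s(7680, 1600, 60)
single = afr_copy_gb_per_s(1920, 1200, 60)
print(f"7680 x 1600 @ 60 fps: {eyefinity:.2f} GB/s")  # 1.47
print(f"1920 x 1200 @ 60 fps: {single:.2f} GB/s")     # 0.28
print(f"ratio: {eyefinity / single:.1f}x")             # 5.3
```

Even under these generous assumptions, a 7680 x 1600 frame is about 49 MB, more than five times the data of a single 1920 x 1200 frame, and that traffic scales directly with frame rate.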

The difficulties most games have with such a wide setup prevent 3 x 30" Eyefinity (or even any triple-monitor configuration) from being a real recommendation. If you have three monitors, sure, why not, but I don't believe it's anywhere near practical. Not until ATI gets a lot of the software compatibility taken care of.

114 Comments


tcube - Monday, March 1, 2010

Well, my thought is that if AMD would release this card on SOI/HK-MG in a 32/28nm MCM config at 4 GHz, it would leave NVIDIA wondering what it did to deserve it. And I wonder... why the heck not? These cards would be business-grade, and with decent silicon they could ask enormous prices. Plus they could probably stick the entire thing in a dual config on one single card (possibly within the 300 W PCIe v2 limit)... that would be a 40-50 TFLOPS card, and with the new GDDR5 (5 GHz+) it should qualify as a damn monster. I would also expect ~$2-3k per such beast, but I think it's worth it. 50 TF/card, 6 cards/server... 3 PFLOPS/cabinet... hrm... well... it wouldn't be fair to compare it to general-purpose supercomputers... but you could definitely ray trace render Avatar directly into HD 4x 3D in realtime and probably make it look even better in the process...

srikar115 - Sunday, December 6, 2009

I agree with this review; here's a complete summary I found that's also interesting: http://pcgamersera.com/2009/12/ati-radeon-5970-rev...

srikar115 - Tuesday, April 20, 2010

http://pcgamersera.com/ati-radeon-5970-review-suma...

xpclient - Friday, November 27, 2009

What, no test to check video performance/DXVA? DirectX 11/WDDM 1.1 introduced DXVA HD (accelerated HD/Blu-ray playback).

cmdrdredd - Sunday, November 22, 2009

Clearly nobody buying this card is going to put Crysis on "Gamer Quality"; they'll put it on the max it can go. Why is AT still the only tech site in the whole world using "Gamer Quality" with a card that has enough power to run a small town?

AnnonymousCoward - Sunday, November 22, 2009

Why does the 5970 get <= 5850 CF performance when it has 3200 stream processors vs. 2880?

araczynski - Saturday, November 21, 2009

I look forward to buying this, in a few years.

JonnyDough - Friday, November 20, 2009

Why they didn't just call it the 5880.

Paladin1211 - Friday, November 20, 2009

Ryan, I'm a professional L4D player, and I know the Source engine delivers very high frame rates on today's cards. The test becomes silly because there is no difference at all between 60 and 300+ fps. So it all comes down to the minimum fps.

I suggest that you record a demo on map 5 of Dead Air, with the 4 Survivors defending with their backs against the limit line at the position of the first crashed plane. The main player for the recording gets vomited on by a Boomer, another throws a pipe bomb near him, and another throws a Molotov near him as well. A full force of zombies (only 30), 2 Hunters, 1 Smoker and 1 Tank attack. (When a player becomes a Tank, the boss he was controlling becomes a bot and keeps attacking the Survivors.)

This is the heaviest practical scene in L4D, and it makes sense as a benchmark. You don't really need 8 players to arrange the scene; I think using cheats is much easier.

I know it will take time to re-benchmark all of those cards with the new scene, but I think it won't be too much. Even if you can't do this, please reply to me.

Thank you :)

SunSamurai - Friday, November 20, 2009

You're not a professional FPS player if you think there is no difference between 60 and 300 fps.