NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

ECC Support

AMD's Radeon HD 5870 can detect errors on the memory bus, but it can't correct them. The register file, L1 cache, L2 cache and DRAM all have full ECC support in Fermi. This is one of those Tesla-specific features.

Many Tesla customers won't even talk to NVIDIA about moving their algorithms to GPUs unless NVIDIA can deliver ECC support. The scale of their installations is so large that ECC is absolutely necessary (or at least perceived to be).

Unified 64-bit Memory Addressing

In previous architectures there was a different load instruction for each type of memory: local (per thread), shared (per group of threads) or global (per kernel). As a result, the memory space a pointer referred to had to be known at compile time. This created issues with pointers and generally made a mess that programmers had to clean up.

Fermi unifies the address space so that there's only one load instruction, and the address alone determines which memory is accessed. The lowest range of addresses is for local memory, the next range is for shared memory, and the remainder of the address space is global.

The unified address space is apparently necessary to enable C++ support for NVIDIA GPUs, which Fermi is designed to do.

The other big change to memory addressability is the size of the address space. G80 and GT200 had a 32-bit address space, which tops out at 4GB, but next year NVIDIA expects to see Tesla boards with over 4GB of GDDR5 on board. Fermi now supports 64-bit addresses, although the chip can physically address only 40 bits of memory, or 1TB. That should be enough for now.

Both the unified address space and 64-bit addressing are almost exclusively for the compute space at this point. Consumer graphics cards won't need more than 4GB of memory for at least another couple of years. These changes were painful for NVIDIA to implement and ultimately contributed to Fermi's delay, but in NVIDIA's eyes they were necessary.

New ISA Changes Enable DX11, OpenCL and C++, Visual Studio Support

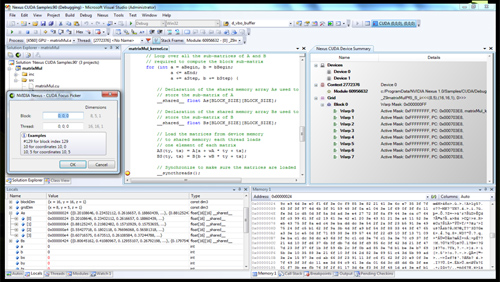

Now this is cool. NVIDIA is announcing Nexus (no, not the thing from Star Trek Generations), a Visual Studio plugin that enables hardware debugging of CUDA code. With Nexus you can treat the GPU like a CPU: step into functions and inspect the state of the GPU, all within Visual Studio. This is a huge step forward for CUDA developers.

Nexus running in Visual Studio on a CUDA GPU

Simply enabling DX11 support is a big enough change for a GPU; AMD had to go through that with RV870. Fermi goes further, implementing a wide set of changes to its ISA aimed primarily at enabling C++ support. Virtual functions, new/delete and try/catch are all part of C++, and all are enabled on Fermi.

415 Comments


sandwiches - Thursday, October 1, 2009

Just created an account to say that I've never seen sycophantic, schizophrenic blathering like SiliconDoc's. I have been an Nvidia user for the past 7 years or so (simply out of habit), and before that a Voodoo user, and I cannot even begin to relate to this buffoon.

Oh, and to incense him even more, I'd like to add that I bought an HD 5870 from Newegg a couple of nights ago, since I needed to upgrade my old 8800 GTS card NOW... not in a few months or next year. Now. Seeing as the HD 5870 is the fastest for my buck, I went with that. Forget treating brands like religions. Get whatever's good and you can afford, and forget brands. The end.

SiliconDoc - Friday, October 2, 2009

LOL - another lifelong nobody who hasn't a clue, and was a green goblin by habit, self-reported, made another account, and came in just to "incense" "the debater".

Really, you couldn't have done a better job of calling yourself a piece of trash.

Congratulations for that.

You admitted your goal was trolling and fanning the flames.

LOL

That was your goal, and so bright you are, that "sandwiches" came to mind as your name, a complimentary imitation, indicating you hoped to be equal to "Silicon" and decided to take a "tech part" styled name, or, perhaps as likely, it was haphazard chance cause buckey was hungry when he got here, and that's all he could think of.

I think you're another pile of stupid with the C and below crowd, basically.

So, that sort of explains your purchase, doesn't it ?

LOL

Yes, you can't possibly relate, you need more MHz and a lot bigger power supply, "sandwiches".

LOL

sandwiches!

CptTripps - Friday, October 2, 2009

Reading your comments makes me think you are about 12 and in need of serious evaluation. I have never seen someone so out of control on Anand for 30+ pages. Seriously, get your brain checked out; there is some sort of imbalance going on when everyone in the whole world is a liar except you, the only beacon of truth.

sandwiches - Friday, October 2, 2009

LMAO

This has to be an online persona for you, Brian. Are you seriously like this in real life?

Voo - Thursday, October 1, 2009

We really need an "ignore this idiot" button :/

Anyways, is there any reason why this has to be a "one size fits all" chip? According to the article there's a lot of stuff in it which only a minority of gamers would ever need (ECC memory? That's more expensive than normal memory and usually has a performance impact).

I mean there's already a workstation chip, why not a third one for GPU computing?

neomocos - Thursday, October 1, 2009

You know, when one person says you are drunk/stupid/drugged/crazy/etc., you might question him, but when 2 or 3 people say it, it's more than probably true, and when all the people at Anand say it, SiliconDoc should just stfu, go right now and buy an ATI 5870, smash it on the ground, and maybe he will feel better and let us be.

I vote for a ban also :) and I donate $10 for the 5870 we Anand users will give him as a Christmas present. Happy new red year, Silicon...

andrihb - Thursday, October 1, 2009

I'm excited about this but I wish it was ready sooner. It looks like we'll have to wait 2-3 months for benchmarks, right?

I hope it'll blow the 5870 away because that's what is best for us, the consumers. We'll have an even faster GPU available to us, which is all that really matters.

I've noticed that a person here has been criticizing this article for belittling the fact that nVidia's upcoming GPU is likely going to have vastly superior memory bandwidth to ATI's current flagship. Anand gave us the very limited data that exists at the moment and left most of the speculation to us. He doesn't emphasize that Fermi (which won't even be available for months) has far more bandwidth than ATI's current flagship. I contend that most people already suspected as much.

The vastly superior memory bandwidth suggests that nVidia might just have a 5870 killer up its sleeve. See what I just did there? This is called engaging in speculation. Anand could have done more of that, I agree, but saying that this is proof of Anand's supposed bias towards ATI? That is totally unreasonable.

Hey, Doc, do you want to see a real life, batshit crazy, foaming at the mouth fanboy? All you need is a mirror.

JonnyDough - Thursday, October 1, 2009

"Jonah did step in to clarify. He believes that AMD's strategy simply boils down to targeting a different price point. He believes that the correct answer isn't to target a lower price point first, but rather build big chips efficiently. And build them so that you can scale to different sizes/configurations without having to redo a bunch of stuff. Putting on his marketing hat for a bit, Jonah said that NVIDIA is actively making investments in that direction. Perhaps Fermi will be different and it'll scale down to $199 and $299 price points with little effort? It seems doubtful, but we'll find out next year."

FOOL!

So nVidia is going to make this for Tesla. It's great that they're innovating, but you mentioned that those sales are only a small percentage. AMD went from competing strongly in the CPU market to dominating the GPU market. Good move. But if there's no existing market for the GPGPU... do you really want to be switching gears and trying to create one? Hmm. Crazy!

SiliconDoc - Thursday, October 1, 2009

Admin: You've overstayed your welcome, goodbye.

I'm not sure where you got your red rooster lies information. AMD/ati has NO DOMINATION in the GPU market.

---

One moment please to blow away your fantasy...

---

http://jonpeddie.com/press-releases/details/amd-so...

---

Just in case you're ready to scream that I cooked up a pure green biased link, check back a few pages and you'll see the liar who claimed ati is now profitable and holding up AMD because of it, who provided the REAL empty rhetoric fanboy link http://arstechnica.com/hardware/news/2009/07/intel...

which states

"The actual numbers that JPR gives are worth looking at,"

which show INTEL dominates the GPU market!

Wow, what a surprise for you. I've just expanded your world, to something called "honest".

So:

INTEL 50.30%

NVIDIA 28.74%

AMD 18.13%

others below 1% each.

------------

Gee, now you know who dominates, and for our discussions here, who is IN LAST PLACE! AND THAT WOULD BE ATI THE LAST PLACE LOSER!

--

Now, I wouldn't mind something like ati is competitive, but that DOMINATES thing says it's #1, and ati is :

************* ATI IS IN LAST PLACE ! LAST PLACE! **************

Now please whine about the 3 listed at the link at less than 1% each, so you can "pump up ati" by claiming "it's not last", which of course I would welcome, since it's much better than lying and claiming it's number one.

---

I suspect all you crying ban babies are ready to claim to have found absolutely zero information or contribution in this post.

tamalero - Thursday, October 1, 2009

There's a huge difference between the INTEGRATED GRAPHICS SEGMENT, which is almost every laptop out there and a lot of business computers, and the DISCRETE MARKET, which is the GAMING section... where AMD-ATI and NVIDIA are 100%.

Get your facts straight and see a doctor; your delusional attitude is getting annoying.