NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

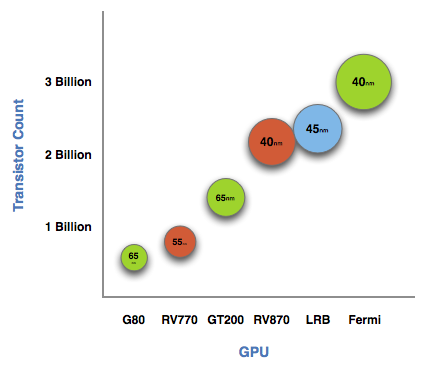

The graph below is one of transistor count, not die size. Inevitably, on the same manufacturing process, a significantly higher transistor count translates into a larger die size. But for the purposes of this article, all I need to show you is a representation of transistor count.

See that big circle on the right? That's Fermi. NVIDIA's next-generation architecture.

NVIDIA astonished us with GT200 tipping the scales at 1.4 billion transistors. Fermi is more than twice that at 3 billion. And literally, that's what Fermi is - more than twice a GT200.

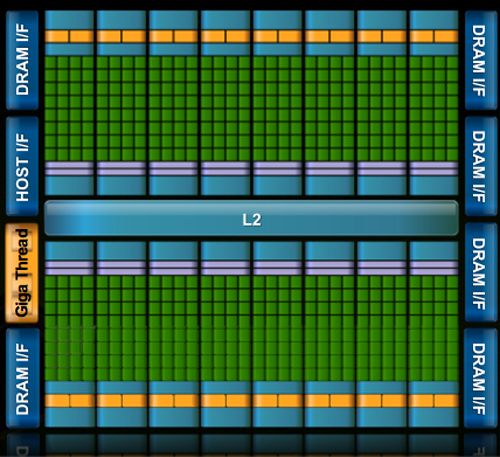

At a high level the specs are simple. Fermi has a 384-bit GDDR5 memory interface and 512 cores. That's more than twice the processing power of GT200 but, just like RV870 (Cypress), it's not twice the memory bandwidth.

The architecture goes much further than that, but NVIDIA believes that AMD has shown its cards (literally) and is very confident that Fermi will be faster. The questions are at what price and when.

The price is a valid concern. Fermi is a 40nm GPU just like RV870 but it has a 40% higher transistor count. Both are built at TSMC, so you can expect that Fermi will cost NVIDIA more to make than ATI's Radeon HD 5870.
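To put rough numbers on those ratios, here is a minimal sketch in Python that uses only the figures quoted in this article (1.4 billion for GT200, 3 billion for Fermi, and a 40% gap over RV870). The variable names are made up for illustration, and the RV870 count it prints is merely what the 40% figure implies, not an official specification.

```python
# Figures quoted in the article (rounded, in transistors).
GT200_TRANSISTORS = 1.4e9
FERMI_TRANSISTORS = 3.0e9

# "40% higher transistor count" than RV870, expressed as a ratio.
FERMI_OVER_RV870 = 1.40

# How many GT200s' worth of transistors is Fermi?
fermi_vs_gt200 = FERMI_TRANSISTORS / GT200_TRANSISTORS

# Back out the RV870 count implied by the 40% figure.
# This is an inference from the article's numbers, not a quoted spec.
implied_rv870 = FERMI_TRANSISTORS / FERMI_OVER_RV870

print(f"Fermi vs GT200: {fermi_vs_gt200:.2f}x")
print(f"Implied RV870 count: {implied_rv870 / 1e9:.2f} billion")
```

On the same 40nm process, a ratio like this is only a loose proxy for relative die area and cost, since transistor density and yield are not accounted for here.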

Timing is just as valid a concern, because while Fermi currently exists on paper, it's not a product yet. Fermi is late. Clock speeds, configurations and price points have yet to be finalized. NVIDIA just recently got working chips back and it's going to be at least two months before I see the first samples. Widespread availability won't be until at least Q1 2010.

I asked two people at NVIDIA why Fermi is late: NVIDIA's VP of Product Marketing, Ujesh Desai, and NVIDIA's VP of GPU Engineering, Jonah Alben. Ujesh responded: because designing GPUs this big is "fucking hard".

Jonah elaborated, as I will attempt to do here today.

415 Comments

Griswold - Wednesday, September 30, 2009 - link

Well, you have to consider that nvidia is getting between a rock and a hard place. The PC gaming market is shrinking. There's not much point in making desktop chipsets anymore... they have to shift focus (and I'm sure they will focus) on new things like GPGPU. I won't be surprised if GT300 won't be the super awesome gamer GPU of choice so many people expect it to be. And perhaps, the one after GT300 will be even less impressive for gaming, regardless of what they just said about making humongous chips for the high-end segment.

SiliconDoc - Wednesday, September 30, 2009 - link

Gee nvidia is between a rock and a hard place, since they have an OUT, and ATI DOES NOT. lol

That was a GREAT JOB focusing on the wrong player who is between a rock and a hard place, and that player would be RED ROOSTER ATI !

--

no chipsets

no chance at TESLA sales in the billions to colleges and government and schools and research centers all over the world....

--

buh bye ATI ! < what you should have actually "speculated"

...

But then, we know who you are and what you're about -

TELLING THE EXACT OPPOSITE OF THE TRUTH, ALL FOR YOUR RED GOD, ATI !

--

silverblue - Thursday, October 1, 2009 - link

When nVidia actually sends out Fermi samples for previews/reviews, only then will you know how good it is. We all want to see it because we want competition and lower prices (and maybe some of us will buy one or more, as well!).

Until then, keep your fanboy comments to yourself.

SiliconDoc - Thursday, October 1, 2009 - link

No silverblue, that is in fact your problem, not mine, as you won't know anything, till you're shown a lie or otherwise, and it's shoved into your tiny processor for your personal acceptance.

The fact remains, red fanboy raver Griswold blew it, and I pointed out exactly WHY.

The fact that you cry about it, because you group stupid dummies keep blowing nearly every statement you make, sure isn't my fault.

silverblue - Thursday, October 1, 2009 - link

I wonder if you do actually read posts before you reply to them.

SiliconDoc - Thursday, October 1, 2009 - link

Take your own advice, you pathetic hypocrit.

ClownPuncher - Thursday, October 1, 2009 - link

Its actually "hypocrite".

SiliconDoc - Friday, October 2, 2009 - link

It's "it's", you pathetic hypocrit.

silverblue - Friday, October 2, 2009 - link

It's "hypocrite", you pathetic hypocrite.

chizow - Wednesday, September 30, 2009 - link

Nvidia is simply hedging their bets and expanding their horizons. They've still managed to offer the fastest GPUs per product cycle/generation and they're clearly far more advanced than AMD when it comes to GPGPU in both theory and practice.

Jensen's keynote tipped his hat numerous times to Nvidia's roots as a GPU company that designed chips to run 3D video games, but the focus of his presentation was clearly to sell it as more than that, as a cGPU capable of incredible computational ability.