Combating Input Lag and Final Words

Now that we know what's going on and what the factors are, what can we do about it?

Sometimes 1ms can be the difference between your input reaching the software in time to be included in the next frame or just missing it. Most of the time it won't be. The difference between an 8ms polling interval (125 reports/second) and a 2ms interval (500 reports/second), however, could actually make a frame of difference in input lag (up to 16.67ms at 60Hz). A mouse that can handle 500 reports/second is what we recommend as a good balance.
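The following Python sketch puts the polling numbers in perspective. It converts a report rate into the worst-case time a movement can wait inside the mouse before being reported, then compares that wait to one 60Hz frame. It assumes the mouse reports at exactly its nominal rate and ignores sensor and driver processing. `polling_interval_ms` and `REFRESH_HZ` are illustrative names, not taken from any tool.

```python
# Worst-case time a movement can wait inside the mouse before it is reported,
# compared against one display frame. Assumes the mouse reports at exactly its
# nominal rate and ignores sensor/driver processing.
REFRESH_HZ = 60  # assumed display refresh rate

def polling_interval_ms(report_rate_hz: float) -> float:
    """Milliseconds between USB reports at a given report (polling) rate."""
    return 1000.0 / report_rate_hz

frame_ms = 1000.0 / REFRESH_HZ
for rate in (125, 500, 1000):
    interval = polling_interval_ms(rate)
    print(f"{rate:>4} reports/s: up to {interval:.2f} ms of extra delay, "
          f"{interval / frame_ms:.0%} of a {frame_ms:.2f} ms frame")
```

Going from 125 to 500 reports/second saves 6ms, which only matters for inputs that land just before the game samples input for a frame. For those inputs, it is the difference between making the next frame and waiting a whole frame longer.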

It is possible to overclock your mouse. You will still be limited by the physical capabilities of the mouse, but running the mouse's USB port at a higher polling rate can help, especially if you don't want to invest in a more expensive mouse. There are tools available both to check your mouse's report rate (Direct Input Mouse Rate) and to change the rate by replacing the USB driver. When changing the rate on Vista SP1 or Windows 7, the drivers need to be signed. You can handle this by using test signatures and forcing Windows to load them. NGOHQ offers a good tutorial on this.
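A report-rate checker essentially timestamps incoming mouse events and looks at the spacing between them. The sketch below is a hypothetical version of that idea, not how Direct Input Mouse Rate is actually implemented. `estimate_report_rate` takes a list of event arrival times in seconds, from whatever capture source you have. It uses the median gap so that pauses in movement don't drag the estimate down.

```python
from statistics import median

def estimate_report_rate(timestamps_s: list[float]) -> float:
    """Estimate a mouse's report rate (Hz) from event arrival times in seconds.

    Uses the median gap between consecutive events so that pauses in
    movement (which produce long gaps) do not drag the estimate down.
    """
    if len(timestamps_s) < 2:
        raise ValueError("need at least two events")
    gaps = [b - a for a, b in zip(timestamps_s, timestamps_s[1:]) if b > a]
    if not gaps:
        raise ValueError("timestamps must be increasing")
    return 1.0 / median(gaps)

# Synthetic example: a mouse reporting every 2 ms, with a 0.5 s pause in the middle.
events = [i * 0.002 for i in range(100)] + [0.7 + i * 0.002 for i in range(100)]
print(round(estimate_report_rate(events)))  # -> 500
```

Move the mouse continuously while capturing. Mice generally send reports only when there is movement or a button change to report, so a stationary mouse says little about its rate.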

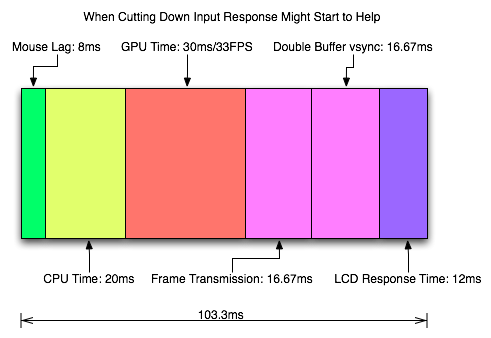

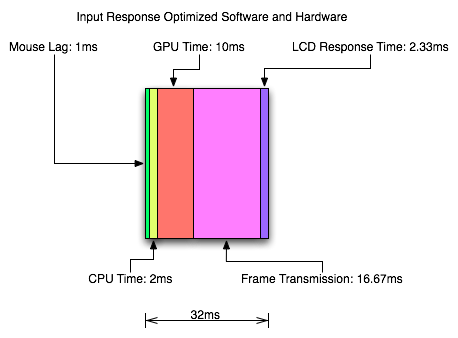

The CPU, memory, and GPU all affect the input lag between the mouse and the display. As the main internal bottleneck in games, the GPU will likely have the largest single impact (a higher framerate means less time between frames and less lag), but this depends heavily on game design. Our basic recommendation is a modestly priced dual core CPU, inexpensive RAM, and a fast GPU. A faster CPU and RAM could help, but they are unlikely to provide a large return on investment here.
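The framerate point can be made concrete with a deliberately simple model. This is an assumption for illustration, not how any particular engine works: the game samples input once at the start of each frame, and the frame takes one full frame time to render. Input arriving at a random moment then waits anywhere from zero to one frame to be sampled, plus one frame to render. `frame_time_ms` and `sampling_latency_ms` are illustrative helpers.

```python
def frame_time_ms(fps: float) -> float:
    """Time between frames at a given framerate."""
    return 1000.0 / fps

def sampling_latency_ms(fps: float) -> tuple[float, float]:
    """(average, worst case) ms from input to a finished frame, under the
    simple model above: input waits 0..1 frame to be sampled (0.5 on average
    for uniformly random arrival), then 1 frame to render."""
    t = frame_time_ms(fps)
    return 1.5 * t, 2.0 * t

for fps in (30, 60, 120):
    avg, worst = sampling_latency_ms(fps)
    print(f"{fps:>3} fps: frame {frame_time_ms(fps):5.2f} ms, "
          f"average {avg:5.2f} ms, worst case {worst:5.2f} ms")
```

In this model both numbers scale with frame time, so doubling the framerate halves the sampling-related lag. Display buffering, scanout, and monitor processing come on top of this.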

For input lag reduction in the general case, we recommend disabling vsync. For NVIDIA card owners running OpenGL games, forcing triple buffering in the driver will provide a better visual experience with no tearing. It will also always begin rendering the same frame that would begin rendering with vsync disabled. Compared with no vsync, input latency is longer only for the part of the frame after the point where we would otherwise see a tear, and even then by less than a full frame.

Unfortunately, every other implementation that calls itself triple buffering is, at this point, actually a one frame flip queue. One frame of render ahead is fine at framerates lower than the monitor refresh rate. If the framerate ever goes past refresh, though, you will experience much more input lag than with vsync alone.

For everyone without a multiGPU solution, we recommend setting flip queue or max pre-rendered frames to either 1 or 0:

- Set it to 1 if your framerate is always less than the monitor refresh rate.
- Set it to 0 if your framerate is always greater than or equal to the refresh rate.

If the framerate goes back and forth, only NVIDIA's OpenGL triple buffering provides the best of both worlds: no tearing, plus further reduced input lag in high framerate situations.
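The buffering behaviour described in the last two paragraphs can be checked with a small discrete-time simulation. This is a simplified Python model built on several assumptions:

- GPU render time is constant.
- Input is sampled when a frame starts rendering.
- Flips happen only at vertical blank.
- There is no CPU, driver, scanout, or monitor delay.

The mode names (`vsync`, `flip1`, `triple`) are labels made up for this sketch. `flip1` models a one frame flip queue as vsync with two back buffers shown in first-in, first-out order. `triple` models the page-flipping triple buffering described above, where each vblank shows the newest completed frame.

```python
from statistics import mean

def simulate(mode: str, render_ticks: int, refresh_ticks: int, total_ticks: int) -> list[int]:
    """Ticks from render start (when input is sampled) to the vblank that first shows each frame.

    mode: "vsync"  - double buffering with vsync (one back buffer, GPU waits for the flip)
          "flip1"  - vsync plus a one frame flip queue (two back buffers, shown in FIFO order)
          "triple" - page-flip triple buffering (GPU never waits; each vblank shows the newest finished frame)
    """
    latencies: list[int] = []
    started = None          # tick at which the frame currently on the GPU started
    finish = 0              # tick at which that frame will be done
    ready: list[int] = []   # start ticks of finished frames not yet shown, oldest first
    back_buffers = 1 if mode == "vsync" else 2
    for t in range(total_ticks):
        # 1. The GPU finishes a frame.
        if started is not None and t == finish:
            if mode == "triple":
                ready = [started]   # newest frame replaces any finished-but-unshown one
            else:
                ready.append(started)
            started = None
        # 2. Vertical blank: flip to the next ready frame, if any.
        if t % refresh_ticks == 0 and ready:
            latencies.append(t - ready.pop(0))
        # 3. The GPU starts a new frame (sampling input) if a back buffer is free.
        if started is None and len(ready) < back_buffers:
            started, finish = t, t + render_ticks
    return latencies

TICK_MS = 0.1   # one tick = 0.1 ms
REFRESH = 167   # ~60 Hz refresh interval in ticks
for render in (50, 250):   # GPU throughput of 200 fps and 40 fps
    print(f"render time {render * TICK_MS:.1f} ms:")
    for mode in ("vsync", "flip1", "triple"):
        lat = simulate(mode, render, REFRESH, 10_000)
        print(f"  {mode:>6}: mean input-to-display {mean(lat) * TICK_MS:.1f} ms over {len(lat)} frames")
```

The model behaves differently above and below the refresh rate:

- At 200 fps of GPU throughput, the one frame flip queue settles at two refresh intervals of lag. Double buffered vsync sits at exactly one, and triple buffering comes in below that.
- At 40 fps, the flip queue and triple buffering produce identical frame timelines, while double buffered vsync is worse.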

Improperly handling vsync (enabling or disabling a 1 frame flip queue at the wrong time) can degrade performance by at least one additional whole frame. But with multiGPU options, we really don't have a choice. With more than one GPU in the system, you will want to leave maximum pre-rendered frames set to the default of 3 and allow the driver to handle everything. Input lag with multiGPU systems is something we will want to explore at a later time.
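The recommendations above boil down to a small decision procedure, which the sketch below encodes. The `Setup` fields and the `recommend` function are hypothetical names. The final fallback (disable vsync when the framerate straddles the refresh rate and NVIDIA's OpenGL triple buffering isn't available) is an interpretation of the general-case advice to disable vsync, not a separate recommendation from the text.

```python
from dataclasses import dataclass

@dataclass
class Setup:
    min_fps: float        # lowest framerate the game hits on this system
    max_fps: float        # highest framerate the game hits
    refresh_hz: float     # monitor refresh rate
    multi_gpu: bool       # more than one GPU in the system
    nvidia_opengl: bool   # NVIDIA card running an OpenGL game (driver triple buffering available)

def recommend(s: Setup) -> str:
    """Map a setup onto the flip queue / pre-rendered frames advice above."""
    if s.multi_gpu:
        return "leave max pre-rendered frames at the default of 3 and let the driver handle it"
    if s.max_fps < s.refresh_hz:
        return "flip queue / max pre-rendered frames = 1"
    if s.min_fps >= s.refresh_hz:
        return "flip queue / max pre-rendered frames = 0"
    if s.nvidia_opengl:
        return "force triple buffering in the NVIDIA driver"
    # Assumption: fall back to the general-case advice when nothing else fits.
    return "no single flip queue setting fits; disable vsync for the lowest input lag"

print(recommend(Setup(min_fps=40, max_fps=120, refresh_hz=60, multi_gpu=False, nvidia_opengl=True)))
```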

You will want a monitor that does little (if any) processing, preferably one with a "game" mode. We recently took a look at a few monitors to get a feel for the difference in input processing. While we didn't test it in this article, adding another 16ms to 33ms of input lag is just not a good idea.

For games that don't inherently carry a lot of input lag, one of the largest benefits comes from refresh rate. A real 120Hz refresh rate can significantly reduce input lag, especially in twitch shooters. The impact would be smaller in games where the framerate can't keep up. Even there, any additional frames of lag incurred between the computer and the monitor are still significantly shortened, since each refresh interval is half as long. Additionally, vsync (even in the worst case) is much cheaper on a high refresh rate monitor. Triple buffering (or even a 1 frame flip queue when performance is lower than refresh) and 120Hz monitors are a match made in heaven.
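Vsync is cheaper at 120Hz because of how double buffered vsync (no render ahead) holds frames. A finished frame waits for the next vertical blank, so each frame occupies a whole number of refresh intervals. The sketch below assumes a constant render time, and `vsync_fps` is an illustrative helper. Under those assumptions, a frame that takes 18ms to render drops to 30 fps at 60Hz but only to 40 fps at 120Hz. The longest possible wait for a vblank also shrinks from 16.67ms to 8.33ms.

```python
import math

def vsync_fps(render_ms: float, refresh_hz: float) -> float:
    """Effective framerate under double buffered vsync with a constant render time.

    Each frame is held until the next vertical blank, so it occupies a whole
    number of refresh intervals. The tiny epsilon keeps a render time that is an
    exact multiple of the refresh interval from being bumped up by float rounding.
    """
    refresh_ms = 1000.0 / refresh_hz
    intervals = max(1, math.ceil(render_ms / refresh_ms - 1e-9))
    return refresh_hz / intervals

for hz in (60, 120):
    print(f"{hz} Hz: 18 ms frames run at {vsync_fps(18.0, hz):.0f} fps "
          f"(unsynced {1000 / 18.0:.1f} fps); longest wait for vblank {1000 / hz:.2f} ms")
```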

Final Words

What started out as a short article on the concept of input lag ended up touching on quite a few key issues in gaming. We got into a few concepts of game design and program flow in addition to looking at hardware impact. While we hadn't planned on it, picking up a camera that can do 1200 FPS allowed us to actually measure the input lag of a couple of real games.
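At 1200 FPS, each camera frame spans 1000 / 1200 ≈ 0.83ms, which sets the resolution of the measurement. Assume the lag is read by counting camera frames between the mouse moving and the first visible change on screen. Converting a count to milliseconds is then simple arithmetic; `frames_to_ms` is an illustrative helper.

```python
CAMERA_FPS = 1200  # capture rate of the high-speed camera used for the measurements

def frames_to_ms(frame_count: int, camera_fps: float = CAMERA_FPS) -> float:
    """Convert a count of camera frames into milliseconds.

    Assumes lag is read by counting frames from the first frame where the mouse
    moves to the first frame showing a change on screen; the result is only
    accurate to about one camera frame (1000 / camera_fps ms).
    """
    return frame_count * 1000.0 / camera_fps

print(f"one camera frame = {frames_to_ms(1):.2f} ms")
print(f"120 frames = {frames_to_ms(120):.1f} ms")
```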

There are quite a few good nuggets to take away. First, input lag is hugely dependent on the game. Some games optimize for reducing input lag and others do not, and it matters more in some games than in others. For games that incur huge amounts of input lag, there is only so much that can be done. Using the tips we provided will definitely help get people on the path to lower input lag.

Unfortunately, reducing input lag to its minimum sometimes requires spending money, especially on the display side of things. Just make sure to read reviews that look at display lag. Avoiding a display that adds an extra 16ms to 33ms of input lag is definitely a good start. Beyond that, a faster GPU is the next most important upgrade, and a mouse that can do at least 500 reports per second is a good idea.

85 Comments


PrinceGaz - Friday, July 17, 2009

Just to add: "For input lag reduction in the general case, we recommend disabling vsync"

It is rather ironic that you used that phrase, when in your previous article you were strongly stating the case for v-sync always being used (preferably with triple-buffering).

Unless you are certain that nVidia's OpenGL implementation of triple-buffering works how you think it does (and not how most people think it does), posting articles may be unwise.

DerekWilson - Sunday, July 19, 2009

By "general case" we mean /when triple buffering is not available/ (real triple buffering is not available in the majority of games). In that case, vsync should be disabled to reduce input lag.

Here's a better breakdown of what we recommend:

Above 60FPS:

triple buffering > no vsync > vsync > flip queue (any at all)

Always EXACTLY 60 FPS (very unlikely to be perfectly consistent naturally):

triple buffering == vsync == flip queue (1 frame) > no vsync

Below 60FPS (non-twitch shooter):

triple buffering == flip queue (1 frame) > no vsync > vsync

Below 60FPS (twitch shooter or where lag is a big issue):

no vsync >= triple buffering == flip queue (1 frame) > vsync

... to us, the fact that at less than 60 FPS you start on exactly the same frame with triple buffering, no vsync, or a 1 frame flip queue is enough. You will get less than 1 frame of difference in lag, and the difference you get with no vsync only applies to part of the frame anyway.

...

And ...

NVIDIA has absolutely CONFIRMED that they do OpenGL triple buffering in the way that I described it in the previous article. This confirmation is directly from their OGL driver team.

I was just able to sit down with AMD two days ago and, while they don't do things in exactly the way I describe them, their OpenGL triple buffering does do something quite interesting at > 60FPS ... which I'll talk about in an upcoming article. They also said they are looking into what it would take to do what I was talking about -- but that they haven't yet because their workstation customers tend to care more about what happens at < 60fps (which is a case where a 1 frame flip queue == triple buffering).

The situation with DirectX is a little more restrictive with both NVIDIA and AMD... I will also address this issue in an upcoming article.

BoFox - Friday, July 17, 2009

Anandtech does yet another ground-breaking article, thanks to Derek Wilson and the use of a high-speed camera this time!

It is rather interesting how you mention the human reaction time! To detect and process the image signal in your brain, and to react to it (usually faster if through reflex), are all part of the entire reaction time of 200-300 ms, correct? Well, we should be testing it on professional gamers like Jonathan 'Fatal1ty' Wendel, to see how much he has tuned his reaction time in making it so reflexive that it's as low as 150ms or even lower. Why not try interviewing him and use your high-speed camera to test that on him, and on an average gamer?!? That would be a SUPER-awesome article here to read!!! :D

About the rendering ahead, some games are designed to render ahead by as much as 3 frames, and changing that to 0 could incur a large performance hit--sometimes by as much as 50%. I have experienced this with some games a while back, cannot remember which ones exactly, and that was on a single video card (not when I was using SLI). The performance penalty would usually bring down the frame rates to below the refresh rate, thus making it very undesirable. There are some other articles cautioning against it, but as long as it does not make a difference in the game that you are playing, then great. At least, as long as it does not drop the frame rates to below the refresh rate, then great!

DerekWilson - Sunday, July 19, 2009

If your framerate is above refresh rate (except for the multiGPU case), you should definitely not use anything more than 0 frames of render ahead. Triple buffering as I described in my previous article (and as NVIDIA does in OpenGL) will be a good option, but double buffered w/ vsync or no vsync will definitely be better than any sort of render ahead.

If your performance is below refresh rate, you'll want to either use no vsync, exactly 1 frame render ahead, or triple buffering. Double buffered vsync (0 flip queue/pre-rendered frames with vsync) will give you a significant drop in performance and increase in input lag when performance is below refresh. This is as you say: by as much as 50%. But only when performance is already below refresh.

nvmarino - Friday, July 17, 2009

Hey Derek, thanks for the great article. Nice job addressing many of the BS theories and comments about lag going on in the threads of your triple buffering article...

And now I've got even more fuel for the fire of my lust for someone to release a 1920x1080 resolution display with support for a 120Hz refresh rate at the INPUT and good black levels. If only I could set my PC to spit out 120Hz to my display, my 24Fps Blu-ray and 60Fps TV content would play without judder, AND my games would have zero tearing (well, as long as they don't run above 120Fps) and minimal lag. Not only that, but I'd also have an ideal setup for shutter-based stereoscopic 3D. It would also open the door for some of the new stuff currently going on in the PC videophile community, like smoothing video via frame interpolation of 24P content to 120P. Can you say HTPC nirvana! Where's my 120Hz!!!!

DerekWilson - Friday, July 17, 2009

yeah, i want a true 120hz 1920x1080 display too :-) mmmm that would be tasty.

Belinda fox - Friday, July 17, 2009

If someone's reaction times are up to 220ms and your results are around the 100ms mark, surely this says something about the validity of the results and conclusion.

Sorry, I never read all of the article, just the first and last pages, due to reaction times being mentioned as part of lag.

BoFox - Friday, July 17, 2009

Just read the article first.

If you had better common sense, you would figure out that the human response time of >200ms comes AFTER seeing what is on the monitor, which takes 100ms to display in the first place.

Let's say that I appear just around the corner right in front of you in an FPS game, like Unreal Tournament 3, for example. First, it takes like 50ms of ping time for your computer to receive that information. Second, it takes your monitor 100ms to display the information. Third, it takes you 200ms to react to what you're seeing.

lyeoh - Sunday, July 19, 2009

And AFTER you react to what you are seeing, you press a key/button or move your mouse, and the relevant portion of the input lag comes into effect (input device, game, network).

With a 100+ms input lag handicap, even on a LAN you could be "dead" before you even see anything or are able to do anything about it.

So it would be good to have some figures on wireless keyboards and mice vs. wired (USB and PS/2).

I personally think wireless stuff will add a lot more lag due to the modulation/demodulation, and other "tricks" they have to do.

DerekWilson - Friday, July 17, 2009

That is definitely an issue ...

i didn't get into network play as it gets really sticky though ... the local client does do limited prediction based on last known info about other players. if this prediction data is accurate enough at small intervals and if ping isn't bad, then it isn't a huge issue ... but at the same time, servers may resolve hits (as apparent to the client) as hits even if they are misses (in reality, as per what the player did), or they may not (the difference results in either increased or reduced effectiveness of techniques like bunny hopping). but it is way more complex and depends on how the client predicts things between server updates and how the server resolves differences between clients and all sorts of funky stuff.

that's why i wanted to stick with mouse-to-display issues here ...

but you are right that there are other factors (including human reaction time) that add up to make it so input lag can be an important slice of a whole chain of events and can affect the gaming experience.
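The client-side prediction mentioned above has a simple basic form: linear extrapolation, often called dead reckoning. The client assumes each remote player keeps moving at the last velocity the server reported. This is a generic sketch, not any particular game's netcode. `Snapshot`, `extrapolate`, and the `max_ahead_s` clamp are hypothetical; the clamp limits how far the guess can run when updates arrive late.

```python
from dataclasses import dataclass

@dataclass
class Snapshot:
    """Last state the server reported for a remote player (hypothetical fields)."""
    time_s: float   # server time of the update
    x: float
    y: float
    vx: float       # velocity, units per second
    vy: float

def extrapolate(last: Snapshot, now_s: float, max_ahead_s: float = 0.1) -> tuple[float, float]:
    """Predict where the player is now by continuing the last known velocity.

    The look-ahead is clamped to [0, max_ahead_s] so a late or lost update
    does not send the predicted position arbitrarily far off.
    """
    dt = min(max(now_s - last.time_s, 0.0), max_ahead_s)
    return last.x + last.vx * dt, last.y + last.vy * dt

last = Snapshot(time_s=1.0, x=0.0, y=0.0, vx=10.0, vy=0.0)
print(extrapolate(last, 1.05))  # about 0.5 units along x
print(extrapolate(last, 2.0))   # clamped to 0.1 s of look-ahead: about 1.0 along x
```

The server remains the authority on what counts as a hit. A prediction error on the client can therefore show up as the hit/miss mismatches described above.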