ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST. Posted in GPUs

The Widespread Support Fallacy

NVIDIA acquired Ageia, the guys who wanted to sell you another card to put in your system to accelerate game physics: the PPU. That idea didn’t go over too well. For starters, no one wanted another *PU in their machine. Secondly, there were no compelling titles that required it. At best we saw mediocre games with mildly interesting physics support, or decent games with uninteresting physics enhancements.

Ageia’s true strength wasn’t in its PPU chip design; many companies could do that. What Ageia did that was quite smart was acquire an up-and-coming game physics API, polish it up, and give it away for free to developers. The physics engine was called PhysX.

Developers can use PhysX, for free, in their games. There are no strings attached, no licensing fees, nothing. If the developer wants support there are fees, of course, but it’s a great way of cutting down development costs. The physics engine in a game is responsible for all modeling of Newtonian forces within the game; the engine determines how objects collide, how gravity works, and so on.
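To make "how objects collide, how gravity works" a bit more concrete, here is a minimal sketch of what a single physics update might look like. This is not PhysX code and none of these names come from the PhysX API; `Body`, `step`, `simulate` and the `restitution` parameter are hypothetical, and the ground is assumed to be a flat plane at height zero.

```python
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2 along the vertical axis (assumed constant)


@dataclass
class Body:
    """A hypothetical point mass tracked only along the vertical axis."""
    y: float                   # height above the ground plane, in metres
    vy: float                  # vertical velocity, in m/s
    restitution: float = 0.5   # fraction of speed kept after a bounce


def step(body: Body, dt: float) -> None:
    """Advance one body by dt seconds (semi-implicit Euler integration)."""
    body.vy += GRAVITY * dt    # gravity changes the velocity first
    body.y += body.vy * dt     # then the new velocity moves the body
    if body.y < 0.0:           # collision with the ground plane at y = 0
        body.y = 0.0
        body.vy = -body.vy * body.restitution  # bounce, losing some speed


def simulate(body: Body, dt: float, steps: int) -> Body:
    """Run a fixed number of equal-sized updates on one body."""
    for _ in range(steps):
        step(body, dt)
    return body
```

A real engine does this for thousands of bodies with arbitrary shapes every frame, which is exactly the sort of parallel workload that a PPU or a GPU is pitched at.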

If developers wanted to, they could enable PPU accelerated physics in their games and do some cool effects. Very few developers wanted to because there was no real install base of Ageia cards and Ageia wasn’t large enough to convince the major players to do anything.

PhysX, being free, was of course widely adopted. When NVIDIA purchased Ageia what they really bought was the PhysX business.

NVIDIA continued offering PhysX for free, but it killed off the PPU business. Instead, NVIDIA worked to port PhysX to CUDA so that it could run on its GPUs. The same catch-22 from before exists: developers don’t have to include GPU accelerated physics and most don’t because they don’t like alienating their non-NVIDIA users. It’s all about hitting the largest audience and not everyone can run GPU accelerated PhysX, so most developers don’t use that aspect of the engine.

Then we have NVIDIA publishing slides like this:

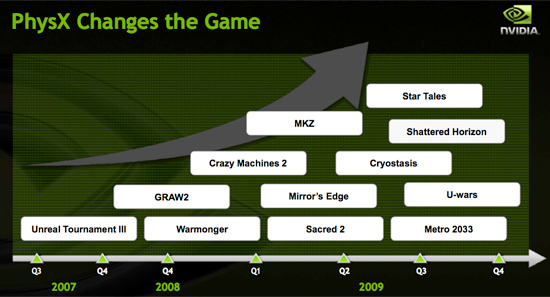

Indeed, PhysX is one of the world’s most popular physics APIs - but that does not mean that developers choose to accelerate PhysX on the GPU. Most don’t. The next slide paints a clearer picture:

These are the biggest titles NVIDIA has with GPU accelerated PhysX support today. That’s 12 titles, three of which are big ones. Most of the rest, well, I won’t go there.

A free physics API is great, and all indicators point to PhysX being liked by developers.

The next several slides in NVIDIA’s presentation go into detail about how GPU accelerated PhysX is used in these titles and how poorly ATI performs when GPU accelerated PhysX is enabled (because ATI can’t run CUDA code on its GPUs, the GPU-friendly code must run on the CPU instead).

We normally hold manufacturers accountable to their performance claims; it was about time we did something about these other claims. Shall we?

Our goal was simple: we wanted to know if GPU accelerated PhysX effects in these titles were useful. And if they were, would that be enough to make us pick an NVIDIA GPU over an ATI one if the ATI GPU was faster?

To accomplish this I had to bring in an outsider. Someone who hadn’t been subjected to the same NVIDIA marketing that Derek and I had. I wanted someone impartial.

Meet Ben:

I met Ben in middle school and we’ve been friends ever since. He’s a gamer of the truest form. He generally just wants to come over to my office and game while I work. The relationship is rarely harmful; I have access to lots of hardware (both PC and console) and games, and he likes to play them. He plays while I work and isn't very distracting (except when he's hungry).

These past few weeks I’ve been far too busy for even Ben’s quiet gaming in the office. First there were SSDs, then GDC and then this article. But when I needed someone to play a bunch of games and tell me if he noticed GPU accelerated PhysX, Ben was the right guy for the job.

I grabbed a Dell Studio XPS I’d been working on for a while. It’s a good little system, the first sub-$1000 Core i7 machine in fact ($799 gets you a Core i7-920 and 3GB of memory). It performs similarly to my Core i7 testbeds so if you’re looking to jump on the i7 bandwagon but don’t feel like building a machine, the Dell is an alternative.

I also set up its bigger brother, the Studio XPS 435. Personally I prefer this machine; it’s larger than the regular Studio XPS, albeit more expensive. The larger chassis makes working inside the case and upgrading the graphics card a bit more pleasant.

My machine of choice. I couldn't let Ben have the faster computer.

Both of these systems shipped with ATI graphics; obviously that wasn’t going to work. I decided to pick midrange cards to work with: a GeForce GTS 250 and a GeForce GTX 260.

294 Comments


SiliconDoc - Tuesday, April 7, 2009

Hey, you're the one with the lies and the cover-ups for ATI, and now the anti-semitic conspiracy theories. Even with all the spewing you've got going there, you couldn't just say "ati is really the one who lost money, not nvidia with the GT200".

Oh well, it's more important to spread FUD and now, conspiracy against "Jews".

Amazing. I had no idea the rabbit hole goes that deeply. rofl

helldrell666 - Wednesday, April 8, 2009

Check for yourself. It's not a conspiracy, these are facts. In fact, the 4800 series cards are the most successful generation of cards ATI ever produced. The 4890, which measures about half the size of the gtx285, beats the latter in most games at full HD resolutions.

Btw, where are you from?

SiliconDoc - Friday, April 24, 2009

I guess you forgot about the pci mach64, and dummy there in between doesn't have a clue what that is. Let's see, another lie you told - ati is huge blah blah blah nvidia only 5,000 jobs...

http://finance.yahoo.com/q/bc?s=NVDA&t=5y&...

3 billion, 4 billion 3.5 billion SALES WITH PROFITS !

http://finance.yahoo.com/q/is?s=NVDA&annual

NVIDIA IS ALREADY RECOVERING AND STOCK IS UP NICELY. SORRY RED FANS...

http://finance.yahoo.com/q/bc?s=AMD&t=5y

VERY BAD NUMBERS FOR AMD (ati only being a portion far less than half)

http://finance.yahoo.com/q/is?s=AMD&annual

RED LOSSES 3 YEARS IN A ROW - OVER 2 BILLION EACH YEAR LAST TWO YEARS A BILLION OF WHICH IS ATI.

________________________________

Now tell me about employment or jobs ? Is that in the communist inflation reprint economy that costs us taxpayers trillions - the fantasy world where CONSTANT billion dollar losses on just a billion dollar company is "sustainable" ?

AMD/ATI IS IN DEADLY SERIOUS TROUBLE AND HAS BEEN

NVIDIA IS ALREADY RECOVERING AND HAS BEEN POSTING A PROFIT.

___________

But in your imaginary world filled with HATRED and LIES, it's just the opposite... isn't it.

How pathetic.

tamalero - Thursday, April 9, 2009

I dont know, the 9700-9800 from ATI were amazing as well.

SiliconDoc - Tuesday, April 7, 2009

You cite "the last quarter", but of course only a fool would use that as a future indicator concerning quality and viability of the company. It's another pathetic attempt, fella. Global downturn means nothing to you, and you FAILED to cite the ati numbers, the two quarters in question, so you really have no point. You must have been afraid to tell the truth? If Nvidia has one low quarter in the midst of massive global downturn, while ati had at least 9 quarters where they suffered losses in a row, who is really in danger of playing on the "competitors" chip?

You see, that's WHY the ati red roosters had to SCREAM endlessly about nvidia's GT200 die size - because THE WHOLE TIME BEHIND THE SCENES OF THEIR FLAPPING RED ROOSTER BEAKS - THEIR BELOVED ATI WAS LOSING BILLIONS....

See bub, that's what has been going on for far too long.

It's really sad and sick, that people can't be HONEST.

All the red roosters had to do was say " hey buy ati, they're in financial trouble and have been, we all want competition to continue so let's pitch in, because the brands are about equivalent. "

See, that would have been honest and respectable and manly.

Instead the raging red roosters lied and covered up and FALSELY ACCUSED their competition of imaginary losses while their little toy was bleeding half to death - like little lying brats, they couldn't help but spew in the midst of IMMENSE BILLION DOLLAR losses for ati, how the gt200 was "hurting nvidia" and how "ati could crush them" with PRICE DROPS - lol - man alive i'm telling you - all those know it all red rooster jerks - it was and is still amazing.

That's fine, just be aware that it --- has been pathetic behavior.

Jamahl - Wednesday, April 8, 2009

Actually, you were the one throwing around $billion losses and FAILED to mention Nvidia's own horrible financial situation. Did you say anything about the global downturn while ranting like a fanatic on AMD's losses? What was it you were saying about HONESTY again? Yes, in caps.

Nvidia hasn't had one low quarter - they've lost 2/3rds of their share value in a year. That doesn't happen in one quarter, same as it didn't happen to AMD in one quarter either.

Nvidia are a horrible little company who hold back progress, and more and more people are wising up to their methods. Articles like this on Anand show what they are like. Nvidia CANNOT COMPETE with ATI on performance so instead they bribe with more cash than ATI use on R&D, and those that don't accept the bribes get cajoled or threatened instead.

All the while sad sycophants like you are banging on about PhysX and cuda as if they make a difference to anyone. What does make a difference is their pathetic rebadging of ancient tech, catching out the people who don't know any better.

http://www.youtube.com/watch?v=a7-_Uj0o2aI&fea...

That just proves how far ahead the r700 is vs the g200b. ATI put money in research in order to improve the experience, while Nvidia put money into bribes in an attempt to hold onto whatever slender lead they have. It's only a financial lead, in tech terms ATI are a country mile ahead and only the worst Nvidia fanboi cant see that.

SiliconDoc - Friday, April 24, 2009

"That just proves how far ahead the r700 is vs the g200b."

That rv700 can't compete AT ALL with the GT200 UNLESS it has DDR5 on it.

That is a FACT. That is REALITY.

Without DDR5 it is the full core 4850 that competes with the "old technology" at the DDR3 level on both cards, the 4850 and the 9800 series and flavors.

That's the truth, YOU LIAR.

Case closed, no takebacks, no uncrossing your fingers, no removing your red raging horns - like - forever.

The r700 CANNOT COMPETE WITH THE GT200 - unless DDR5 is added as an advantage for the r700 which actually competes with the g80/g92/g92b.

NOW, if you screamed and screeched DDR5 is awesome and ati rocks because they used it, I wouldn't disagree or call you the liar you are.

Got it son ?

Figure it out, or go take a college class in logic, and skip the communist training if you possibly can. Might get an estrogen emotion reduction as well while you're at it.

SiliconDoc - Friday, April 24, 2009

Check the five year stock charts before you keep lying, and then as far as your idiotic rant about nvidia, it just goes to show there is no such thing as a fair performance comparison from you people, you will lie your red rooster rooter butts off because you have a big twisted insane hatred for Nvidia, based upon some communist like rage that profit is a sin, and money in the industry is BAD, except PEOPLE get paid with all that money you claim NV throws around. lol

Dude, you're a red rooster rager, look in the mirror and face it, since you can't face the facts otherwise. Embrace it, and own it.

Don't be a liar, instead - or rather if all you're going to do is lie, at least admit it - you're body painted red, no matter what.

The really serious issue is ati has a really bad continuous loss, and might go under.

However, I can understand you communist like raging red roosters screaming for more price drops as you declare the much better off financially NVidia the one "to be destroyed", and demand more price drops, as you scream "profiteering".

Well, the basic fact is plain and apparent, ati had to lose 2 billion dollars to provide their competitive price, and ati purchasers are sitting on that loss, their gain, huh.

Like I said, if Obama and co. give ati/amd a couple billion in everyones taxes, it might work out ok, otherwise bankruptcy is looming - or some massive new investor relations are required.

Either way, you people don't tell the truth, and that of course is the point, over and over again.

JNo - Saturday, April 4, 2009

Well having trawled through all 16 pages of comments I have to say that as much as power & temp benches, I really want NOISE benchmarks. Yes power usually comes at the expense of noise and although I'm primarily a gamer, I hate fan noise too. I happen to have an 8800GT which was great value when it first came out but it becomes a whirlwind in most games and it drives me crazy, breaks the immersion and only in ear headphones help.

When the scores are this close, I err on the side of silence and (from other sites) it sounds like the GTX275 is noticeably quieter than the 4890 under load.

Also, the GTX275 may suck up more juice under load but it is also the same amount more economical when idle and as I spend way less than 50% of my computer time gaming, that is much more useful to me...

Agree that PhysX is overhyped promises at the moment. So, for sound and power efficiency, I think the GTX275 just sways the vote *for me*. And it can overclock a bit even if the impression I'm getting is not quite as much as the 4890.

Then again, here in the UK the prices are different. The new parts are £200+ and that's 33% more than the GTX260 55nm core216 which can be had for only £150 now and is only a little less powerful than the GTX275 and will surely last fine till the DX11 parts come out... choices... choices...

helldrell666 - Saturday, April 4, 2009

You can edit your vga bios using the radeon bios editor v1.12, which is the one im using now on my 4870, and adjust the frequencies in different modes. By downclocking your radeon card in idle mode, you can get it to operate properly in idle mode without sucking so much power. You can use ATI tray tools also for the same purpose. As for the noise, i definitely recommend you to wait a little bit until the non-reference cards get released.

According to some sites, AMD is going to release its DX11 cards in Q3 this year, so if you're planning to upgrade, you'd better consider waiting a little bit and get a far better card than the current available ones.

From my personal experience, a 4870 1gig is more than enough to play most current games at 24" resolutions with all the settings on their highest including the eye candy... except for Crysis and Stalker clear sky... If you have a smaller monitor than mine, then you might as well consider a 4850 or a gts250...