ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST. Posted in GPUs

The Widespread Support Fallacy

NVIDIA acquired Ageia, the guys who wanted to sell you another card to put in your system to accelerate game physics: the PPU. That idea didn’t go over too well. For starters, no one wanted another *PU in their machine. And secondly, there were no compelling titles that required it. At best we saw mediocre games with mildly interesting physics support, or decent games with uninteresting physics enhancements.

Ageia’s true strength wasn’t in its PPU chip design; many companies could do that. What Ageia did that was quite smart was acquire an up-and-coming game physics API, polish it up, and give it away for free to developers. The physics engine was called PhysX.

Developers can use PhysX, for free, in their games. There are no strings attached, no licensing fees, nothing. If the developer wants support there are fees, of course, but it’s still a great way of cutting down development costs. The physics engine in a game is responsible for all modeling of Newtonian forces within the game; the engine determines how objects collide, how gravity works, etc.
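To make "how objects collide, how gravity works" a bit more concrete, here is a minimal sketch of the kind of per-frame update a physics engine performs. This is not PhysX code and does not use the PhysX API; the `Body` class, the `step` and `simulate` functions, the gravity constant and the restitution value are all assumptions made up for illustration, and a real engine handles far more (3D shapes, rotation, friction, many bodies at once).

```python
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2, assumed constant downward acceleration


@dataclass
class Body:
    """A hypothetical point mass that can fall and bounce on a ground plane at y = 0."""
    y: float                  # height above the ground, in meters
    vy: float                 # vertical velocity, in m/s
    restitution: float = 0.5  # fraction of speed kept after a bounce (assumed value)


def step(body: Body, dt: float) -> None:
    """Advance one body by dt seconds using semi-implicit Euler integration."""
    # Gravity: update the velocity first, then the position from the new velocity.
    body.vy += GRAVITY * dt
    body.y += body.vy * dt

    # Collision: if the body sank below the ground, clamp it to the surface
    # and reflect its velocity, losing some energy in the bounce.
    if body.y < 0.0:
        body.y = 0.0
        body.vy = -body.vy * body.restitution


def simulate(body: Body, dt: float, steps: int) -> Body:
    """Run a fixed number of steps, the way a game loop would once per frame."""
    for _ in range(steps):
        step(body, dt)
    return body


if __name__ == "__main__":
    ball = simulate(Body(y=2.0, vy=0.0), dt=1.0 / 60.0, steps=120)
    print(f"after 2 simulated seconds: y={ball.y:.3f} m, vy={ball.vy:.3f} m/s")
```

The work inside `step` is the same small calculation repeated for every object, every frame. That is why effects built from thousands of independent particles or debris fragments are a natural fit for parallel hardware, and why they get expensive when they have to run on the CPU instead.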

If developers wanted to, they could enable PPU accelerated physics in their games and do some cool effects. Very few developers wanted to because there was no real install base of Ageia cards and Ageia wasn’t large enough to convince the major players to do anything.

PhysX, being free, was of course widely adopted. When NVIDIA purchased Ageia what they really bought was the PhysX business.

NVIDIA continued offering PhysX for free, but it killed off the PPU business. Instead, NVIDIA worked to port PhysX to CUDA so that it could run on its GPUs. The same catch-22 from before existed: developers didn’t have to include GPU accelerated physics, and most don’t because they don’t want to alienate their non-NVIDIA users. It’s all about hitting the largest audience and not everyone can run GPU accelerated PhysX, so most developers don’t use that aspect of the engine.

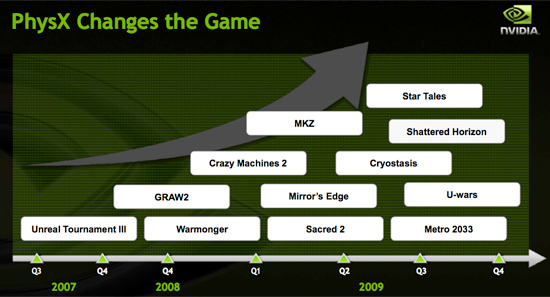

Then we have NVIDIA publishing slides like this:

Indeed, PhysX is one of the world’s most popular physics APIs - but that does not mean that developers choose to accelerate PhysX on the GPU. Most don’t. The next slide paints a clearer picture:

These are the biggest titles NVIDIA has with GPU accelerated PhysX support today. That’s 12 titles, three of which are big ones; most of the rest, well, I won’t go there.

A free physics API is great, and all indicators point to PhysX being liked by developers.

The next several slides in NVIDIA’s presentation go into detail about how GPU accelerated PhysX is used in these titles and how poorly ATI performs when GPU accelerated PhysX is enabled (because ATI can’t run CUDA code on its GPUs, the GPU-friendly code must run on the CPU instead).

We normally hold manufacturers accountable for their performance claims. Well, it was about time we did something about these other claims, shall we?

Our goal was simple: we wanted to know if the GPU accelerated PhysX effects in these titles were useful. And if they were, would they be enough to make us pick an NVIDIA GPU over an ATI one if the ATI GPU was faster?

To accomplish this I had to bring in an outsider. Someone who hadn’t been subjected to the same NVIDIA marketing that Derek and I had. I wanted someone impartial.

Meet Ben:

I met Ben in middle school and we’ve been friends ever since. He’s a gamer of the truest form. He generally just wants to come over to my office and game while I work. The relationship is rarely harmful; I have access to lots of hardware (both PC and console) and games, and he likes to play them. He plays while I work and isn't very distracting (except when he's hungry).

These past few weeks I’ve been far too busy for even Ben’s quiet gaming in the office. First there were SSDs, then GDC and then this article. But when I needed someone to play a bunch of games and tell me if he noticed GPU accelerated PhysX, Ben was the right guy for the job.

I grabbed a Dell Studio XPS I’d been working on for a while. It’s a good little system, the first sub-$1000 Core i7 machine in fact ($799 gets you a Core i7-920 and 3GB of memory). It performs similarly to my Core i7 testbeds so if you’re looking to jump on the i7 bandwagon but don’t feel like building a machine, the Dell is an alternative.

I also set up its bigger brother, the Studio XPS 435. Personally I prefer this machine; it’s larger than the regular Studio XPS, albeit more expensive. The larger chassis makes working inside the case and upgrading the graphics card a bit more pleasant.

My machine of choice; I couldn't let Ben have the faster computer.

Both of these systems shipped with ATI graphics; obviously that wasn’t going to work. I decided to pick midrange cards to work with: a GeForce GTS 250 and a GeForce GTX 260.

294 Comments

helldrell666 - Wednesday, April 8, 2009

Stick that fudzilla bench up in your.....Fudzilla is the number one nvidia troll site.Put aside, the fugglier nvidia sponored sites, like Hardocp, neoseeker, Driverheaven, Bjorn3d.................And you go on.

Your one ugly fugly creataure.

SiliconDoc - Wednesday, August 5, 2009

helldrell666 - then here's another long set of wins for the GTX275 against the 4890 - you can go tell johnjames on pg. 29 to stick all of them up his... since you're so "red" with embarrassment...

http://www.driverheaven.net/reviews.php?reviewid=7...

http://www.bit-tech.net/hardware/graphics/2009/04/...

http://www.bjorn3d.com/read.php?cID=1539&pageI...

http://www.dailytech.com/422009+Daily+Hardware+Rev...

http://www.guru3d.com/article/geforce-gtx-275-revi...

http://www.legitreviews.com/article/944/15/

http://www.overclockersclub.com/reviews/nvidia_3d_...

http://www.hardwarecanucks.com/forum/hardware-canu...

http://hothardware.com/Articles/NVIDIA-GeForce-GTX...

http://www.engadget.com/2009/04/02/nvidia-gtx-275-...

http://www.overclockersclub.com/reviews/nvidia_gtx...

http://www.pcper.com/article.php?aid=684&type=...

----

There you go - now I'm sure every one of those sights is filled with green bias, huh , the worst kind...

ROFLMAO

mav3rick - Wednesday, April 8, 2009

ehehe.. he goes around name calling almost everyone a red rooster.. wonder if he sees himself as the green goblin? :D

jokes aside.. seriously, why limit yourselves to just one camp?? why be fanboys? just get whatever card which give the most bang for your budget and also your needs. i started out with a Matrox G200 (eheh.. you can basically guess how 'young' i am). This was followed by the NVidia GeForce2 MX. The next upgrade was the Radeon 9500.. plus.. managed to unlock the 4 pipelines to make it the legendary 9700.. what more can you ask for. next came the X1800GTO, again managing to unlock 4 disabled pipelines and it became X1800XL. and before silicondoc calls me a red rooster.. lolz.. my current card is the 8800GT. real great buy with it oc capabilities and longevity..

currently with the flurry of releases, i'm targeting either the GTX 260+ or the 1GB 4870 as i only have a 22" flat screen. Performance between both cards are comparable. the difference of a few framerates are barely noticeable.. so its down to pricing. Waiting now to see if there are any further reductions :p As for PhysX, it doesnt seem to have really taken off yet.. game developers are not stupid. if they wanna sell more copies, they will have to make sure it works on as many cards as possible.

SiliconDoc - Friday, April 24, 2009

Green Goblin, ahh... many that is ugly...

Now, one problem with what you said - you definitely should note I don't call everyone a red rooster - just the liars - there are quite a few posts here that make fine points for ati that aren't steeped in bs up to their necks and over their heads.

You should also note I make the proper POINTS when I post, that prove what is and has been said by this brainwashed crew of red rooster just isn't the truth, period.

See that's the problem -

I certainly don't mind one bit if the truth is told, but if you have to lie so often to keep your favorite looking good, well, that's just stupidity besides being dishonest.

I've made quite a few points the in the box cluckers cannot effectively, fairly, or honestly counter - and the months and months of brainwashing won't unwind right away - but at least it's going into their noggins, and will eat away at the lies, little by little the small voice inside will spank their inner red stepchild into shape.

Hey, I'm a helpful person. Reality should win.

tamalero - Thursday, April 9, 2009

dont forget the X800GTO2 that could unlock extra pipes to become X850XT

Jamahl - Tuesday, April 7, 2009

Oh wait lol no they aren't. If they were they wouldn't have a failing chipset side of their business and revenues down from 1.2bn to 480m in the last quarter.

They don't have an x86 licence and intel are suing them. How bad do you have to be for intel to sue lol. Oh yeah, on intel - that reminds me, who was it that took the playstation away from Nvidia again? Seeing as we're talking about consoles now, how about we talk about the 50 million wii's sold with ATI graphics inside?

Fusion is the party of the future and Nvidia aren't invited. To sum up, this desperate little company is going down the tubes and taking all of its clueless fanbois with it. It will be great in the near future when you are all forced to game on ATI graphics lol.

SiliconDoc - Tuesday, April 7, 2009

January 2008 - and perhaps as bad or worse since then.

" Without the charge, AMD came close to breaking even in the quarter, listing an operating loss of $9 million. Revenue for Q4 was $1.77 billion, up 8% sequentially and virtually flat compared to Q4 2006. The ATI write-down resulted in a total loss of $3.38 billion for the year, with total non-cash charges adding up to $2.0 billion. 2007 sales were $6.0 billion, up 6% from 2006. "

http://www.tgdaily.com/content/view/35669/118/

.

That looks like ATI writedown was 3.38 billion - gee, 2 billion loss for one year.

Oh well, they can crush nvidia with their tiny core vs nvidia's big core by dropping prices, right ?

LOL

Thanks for nothing but lies, red roosters.

SiliconDoc - Tuesday, April 7, 2009

Oh and let's not forget the Dubai ownership portion of ati/amd.

Great.

Not trusted for US ports by the American people, but fanboys love it anyway.

Great.

helldrell666 - Tuesday, April 7, 2009

Yes, whether you believe me or not, it's true.I had had much more driver problems with my 8800gtx than what i have had with my current 4870.As for the arabs, they don't own the company.AMD just bought part of it's manufacturing business to the arabic company,ALMUBSDALA.

And to be more specific, AMD decided to share it's manufacturing business with another "foregin" company because it can't afford the manufacturing costs.

I don't see anything wrong with that.But in case you don't know, i have to give you some informations about who owns what.

Did you know that 2 russian "jewish" billionaires, "Naoom Birizofski and Jacob Trotski", own 79% of INTELs shares.

Did you know that JEWs own all the major technological companies in the WEST. From IBM, Ford, Boeing, Le Stabrolle, Shell..........to ADIDAS, Mercedes, OPEL, NPD Petrochemicals ......

Put aside the US Media, that is totally owned and controled by jewish businessmen..

The whole western civilization in it's all glory is getting purchased by jews.So if you think that those Arabs will/have any kind of effect on anything, then your wrong.

AMD was in big trouble and no other company offered any kind of help.AMD simply wasn't able to keep both it's manufacturing and designing business and make a revenue.

Now, with their manufacturing business is getting fixed and grown, the company is definitely in a better state.As you know, or maybe don't, the global foundaries is expanding now by building two other fabs in NY and Malta.Beside, they fixed and did a lot to AMDs to fabs in Germany. so what AMd has done is establishing a new manufacturing

entity, that will provide thousands of jobs for those great minds in both Europe and USA, and will compete efficiently with those Taiwanese manufacturing companies.

But you, with your stupid little brain, can't recognize all that.you think that your nvidia, that small little company with it's 5000 workers, is more important than anything else.

What about competition?

What about innovation?

what about prices? Are you aware of what the state of the prices of gpu, cpus... or any other products would become without competition?

SiliconDoc - Wednesday, April 8, 2009

Here's the other thing, no matter how much you give your opinion on amd/ati, ati lost them billions, and nvidia, even though you pretend otherwise, actually "puts to work" more people than ati making and selling their gpu's, even if they don't have some holding corporation monster over the top of them, bleeding billions as well.

So, you went into your love for amd, and I guess your love for ati, which according to you "saved the loser" called amd. Like I said, you're more fanboy than one could initially have surmised, and your bias is still bleeding out like mad, not to mention the crazy conspiracy talk.