MultiGPU Update: Two-GPU Options in Depth

by Derek Wilson on February 23, 2009 7:30 AM EST - Posted in GPUs

Crysis Warhead Analysis

Crysis Warhead, the sequel that retells the original's story from a different perspective, does a great job of refining the Crysis engine: it strikes a better balance between performance and playability while keeping the engine forward-looking (though it lacks the native 64-bit runtime of the original game). We push the settings pretty high even though we don't turn everything all the way up. Everything is set to "Gamer" quality except Shaders, which are set to "Enthusiast".


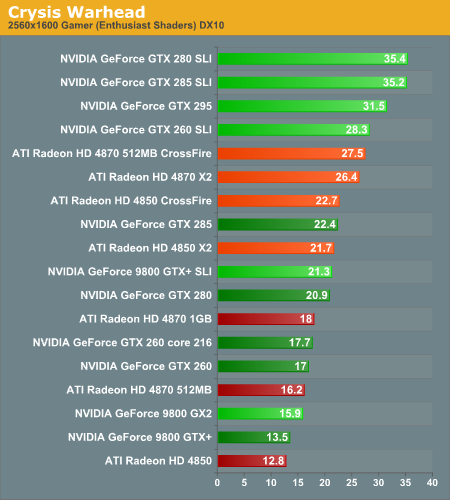

Like CoD, Crysis favors NVIDIA hardware. The settings we're rocking require more than a single Radeon HD 4850 or GeForce 9800 GTX+ even at 1680x1050, so many gamers will likely be running at lower settings than these. As with CoD, SLI sweeps this benchmark in terms of performance. The Radeon HD 4870 CrossFire setup pushes up against GeForce GTX 260 SLI, but a GTX 260 Core 216 or overclocked GTX 260 SLI setup would easily put some distance between them.


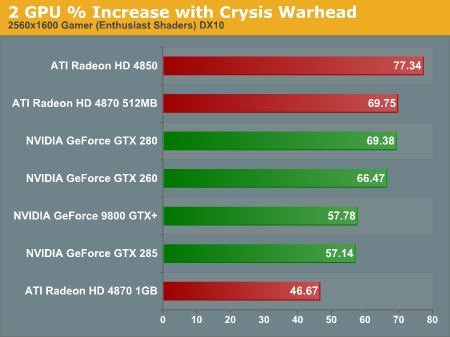

In terms of scaling, SLI looks better at lower resolutions while CrossFire puts the heat on as resolution increases. Despite the fact that the 4850 scales at over 77% (which is very good), the higher baseline of the NVIDIA cards keeps this from making the impact that it could. At the same time, configurations with two 4850 cards perform on par with the GTX 285 and offer much better value.
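To make the scaling-versus-baseline point concrete, here is a minimal Python sketch. It assumes "scaling" means the percentage gain of two cards over one (so 100% is a perfect doubling); that definition is our assumption rather than something stated in this section. The frame rates are hypothetical, chosen only to show how a card with stronger scaling can still trail one with a higher single-card baseline.

```python
def scaling_percent(single_fps: float, multi_fps: float) -> float:
    """Percent gain of a multi-GPU setup over a single card.

    Assumed definition: (multi / single - 1) * 100, so 100 means the
    second GPU exactly doubled the frame rate.
    """
    if single_fps <= 0:
        raise ValueError("single_fps must be positive")
    return (multi_fps / single_fps - 1.0) * 100.0


def projected_multi_fps(single_fps: float, scaling: float) -> float:
    """Frame rate implied by a single-card result and a scaling percentage."""
    return single_fps * (1.0 + scaling / 100.0)


# Hypothetical numbers: card A scales better, card B has the higher baseline.
card_a = projected_multi_fps(30.0, 77.0)  # weaker single card, 77% scaling
card_b = projected_multi_fps(40.0, 60.0)  # stronger single card, 60% scaling
print(f"A: {card_a:.1f} fps, B: {card_b:.1f} fps")
print(f"A scaling: {scaling_percent(30.0, card_a):.0f}%")
```

Even with the better scaling figure, card A ends up behind card B in absolute frame rate, which is the effect the higher NVIDIA baseline has here.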


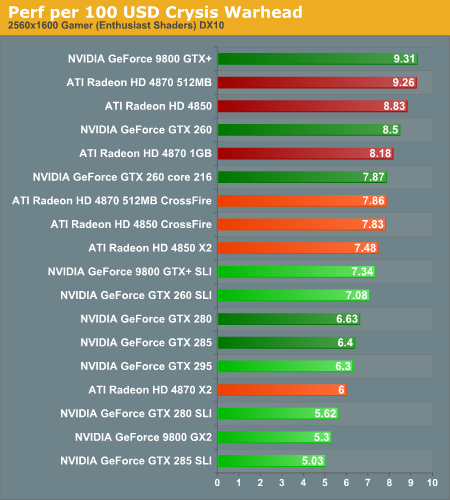

Our performance and value data show that, in this case, AMD's approach to single-card multi-GPU at the high end is effective. The 4850 X2 2GB can be had from Newegg for less than $300, which is more than $50 cheaper than a single-GPU NVIDIA solution that delivers the same performance where it counts.
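The value argument comes down to performance per dollar. The sketch below assumes value is expressed as average frame rate per $100 spent; the `Config` class, the configuration names and the frame rates are hypothetical placeholders, and only the rough price points echo the paragraph above.

```python
from dataclasses import dataclass


@dataclass
class Config:
    """A graphics setup with its street price and an average frame rate."""
    name: str
    price_usd: float
    avg_fps: float

    def fps_per_100_usd(self) -> float:
        # Assumed value metric: frames per second for every $100 spent.
        return self.avg_fps / self.price_usd * 100.0


# Hypothetical configurations with equal performance "where it counts".
configs = [
    Config("dual-GPU card", price_usd=299.0, avg_fps=40.0),
    Config("single-GPU card", price_usd=360.0, avg_fps=40.0),
]

# Rank from best to worst value.
for c in sorted(configs, key=Config.fps_per_100_usd, reverse=True):
    print(f"{c.name}: {c.fps_per_100_usd():.2f} fps per $100")
```

When two setups perform the same, the metric simply rewards the cheaper one; it becomes more informative when the faster setup also costs more.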

It's interesting to note that AMD's two-card solutions tended to scale better than its single-card multi-GPU options here. Where memory is the limiter, we see our higher-memory single-card options scaling better; that isn't what happens here, so something other than memory appears to be the bigger bottleneck. We can't say for certain, but our guess is that it's the PCIe bus: with each card getting a full x16 slot, each GPU can communicate more efficiently with the host, and that seems to benefit Crysis performance.

At 1920x1200, the only single-card solutions that remain playable are the GTX 280 and GTX 285. Getting good performance on a 30" monitor requires either a GTX 295 or a pair of GTX 280/285s. Nothing else passes the test at the highest resolution we tested.

95 Comments


Hauk - Monday, February 23, 2009

To you grammer police... get a life will ya?!? Who gives a rats ass! It's the data!

Your smug comments are of ZERO value here. You want to critique, go to a scholarly forum and do so.

Your whining is more of a distraction! How's that for gramaticly correct?

Slappi - Tuesday, February 24, 2009

It should be grammar not grammer.

SiliconDoc - Wednesday, March 18, 2009

Grammatically was also spelled incorrectly. lol

The0ne - Monday, February 23, 2009

"In general, more than one GPU isn't that necessary for 1920x1200 with the highest quality settings,..."

I see many computer setups with 22" LCDs and lower that have high end graphic cards. It just doesn't make sense to have a high end card when you're not utilizing the whole potential. Might as well save some money up front and if you do need more power, for higher resolutions later, you can always purchase an upgrade at a lower cost. Heck, most of the time there will be new models out :)

Then again, I have a quad-core CPU that I don't utilize too but... :D

7Enigma - Monday, February 23, 2009

Everyone's situation is unique. In my case I just built a nice C2D system (OC'd to 3.8GHz with a lot of breathing room up top). I have a 4870 512meg that is definitely overkill with my massive 19" LCD (1280X1024). But within the year I plan on giving my dad or wife my 19" and going to a 22-24". Using your logic I should have purchased a 4850 (or even 4830) since I don't NEED the power. But I did plan ahead to future proof my system for when I can benefit from the 4870.

I think many people also don't upgrade their systems near as frequently as some of the enthusiasts do. So we spend a bit more than we would need to at that particular time to futureproof a year or two ahead.

Different strokes and all that...

strikeback03 - Monday, February 23, 2009

The other side of the coin is that most likely for similar money, you could have bought something now that more closely matches your needs, and a 4870 in a year once it has been replaced by a new card if it still meets your needs.

7Enigma - Tuesday, February 24, 2009

Of course. Or I could spend $60 now, another $60 in 3 months, and you see the point. It's all dependent on your actual need, your perceived need, and your desire to not have to upgrade frequently.

I think the 4870 is one of those cards like the ATI 9800pro that has a perfect combination of price and performance to be a very good performer for the long haul (similarly to how the 8800GTS was probably the best part from a price/performance/longevity standpoint if you were to buy it the day it first came out).

Also important is looking at both companies and seeing what they are releasing in the next 3-6 months for your/my particular price range. Everything coming out seems to be focused either on the super high end, or the low end. I don't see any significant mid-range pieces coming out in the next 3-6 months that would have made me regret my purchase. If it was late summer or fall and I knew the next round of cards were coming out I *may* have opted for a 9600GT or other lower-midrange card to hold over until the next big thing but as it stands I'll get easily a year out of my card before I even feel the need to upgrade.

Frankly the difference between 70fps and 100fps at the resolutions I would be playing (my upgrade would be either to a 22 or 24") is pretty moot.

armandbr - Monday, February 23, 2009

http://www.xbitlabs.com/articles/video/display/rad... here you go

Denithor - Monday, February 23, 2009

Second paragraph, closing comments:

Fourth paragraph, closing comments:

Please remove the apostrophe from the first sentence (where it should read its) and instead move it to the second (which should be we're).

Otherwise excellent article. This is the kind of work I remember from years past that originally brought me to the site.

One thing - would it be too difficult to create a performance/watt chart based on a composite performance score for each single/pair of cards?

I do think you really pushed the 4850X2 a bit too much. The 9800GTX+ provides about the same level of performance (better in some cases, worse in others) and the SLI version manages to kick the crap out of the GTX 280/285 nearly across the board (with the exception of a couple of 2560x1600 memory-constricted cases) at a lower price point. That's actually in my mind one of the best performance values available today.

SiliconDoc - Wednesday, March 18, 2009

Forget about Derek removing the apostrophe, how about removing the raging red fanboy ati drooling? When the GTX260 SLI scores in 20 of the 21 game runs, and the 4850 DOESN'T, Derek is sure not to mention the GTX260, and on the very same page blabs that the 4850 sapphire "ran every test"...

This is just another red raging fanboy blab - so screw the apostrophe !

NVIDIA DISSED 'em because they can see the articles Derek posts here bleeding red all over the place.

DUH.