MultiGPU Update: Two-GPU Options in Depth

by Derek Wilson on February 23, 2009 7:30 AM EST - Posted in GPUs

Crysis Warhead Analysis

Crysis Warhead, the sequel to the original that follows the same story from a different perspective, does a great job of improving on the Crysis engine, balancing performance and playability while remaining forward-looking (though it lacks the original game's native 64-bit executable). We push the settings pretty high, though we don't turn them all the way up: everything is set to "Gamer" quality except Shaders, which are set to "Enthusiast".

Resolutions: 1680x1050 | 1920x1200 | 2560x1600

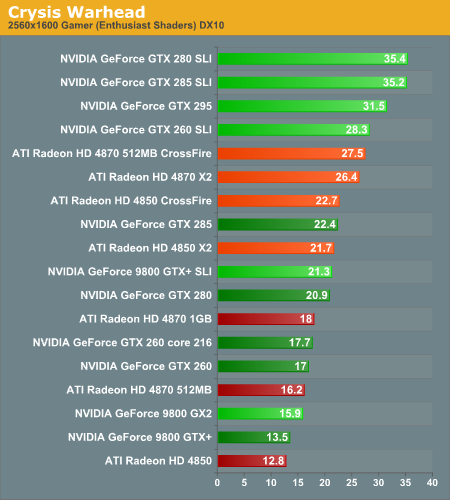

Like CoD, Crysis favors NVIDIA hardware. The settings we're rocking require more than a single Radeon HD 4850 or GeForce 9800 GTX+ can deliver even at 1680x1050, so many gamers will likely be running lower settings than these. As with CoD, SLI sweeps this benchmark in terms of performance. Radeon HD 4870 CrossFire pushes up against GeForce GTX 260 SLI, but a GTX 260 Core 216 or overclocked GTX 260 SLI setup would easily put some distance between them.

Resolutions: 1680x1050 | 1920x1200 | 2560x1600

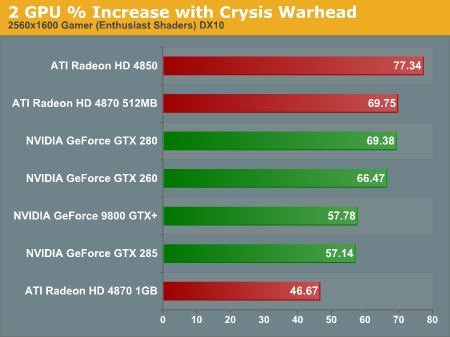

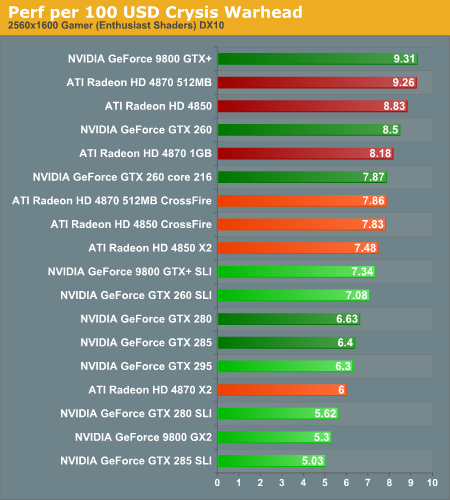

In terms of scaling, SLI looks better at lower resolutions, while CrossFire puts the heat on as resolution increases. Although the 4850 scales by over 77% (which is very good), the higher single-GPU frame rates of the NVIDIA cards keep that scaling from having as much impact as it could. At the same time, two 4850 cards perform on par with a GTX 285 and offer much better value.
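Scaling here means the percentage gain of a two-GPU setup over a single card of the same model. The minimal sketch below assumes that definition (the article doesn't spell out its formula) and uses made-up frame rates, not the article's measurements, to show why a card that scales well can still trail a faster card that scales less. `scaling_percent` is a hypothetical helper name.

```python
def scaling_percent(single_gpu_fps: float, multi_gpu_fps: float) -> float:
    """Percentage gain of a multi-GPU setup over one card of the same model.

    100% would mean the second GPU exactly doubled the frame rate.
    """
    if single_gpu_fps <= 0:
        raise ValueError("single-GPU frame rate must be positive")
    return (multi_gpu_fps / single_gpu_fps - 1.0) * 100.0


if __name__ == "__main__":
    # Hypothetical numbers: card A scales well from a low baseline,
    # card B scales less but starts from a higher single-GPU frame rate.
    for name, single, dual in [("card A", 25.0, 44.5), ("card B", 32.0, 50.0)]:
        print(f"{name}: {scaling_percent(single, dual):.0f}% scaling, "
              f"{dual:.1f} fps with two GPUs")
```

With these inputs card A scales by 78% and card B by about 56%, yet card B still ends up with the higher two-GPU frame rate, which is the effect described above for the 4850 versus the NVIDIA parts.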

Resolutions: 1680x1050 | 1920x1200 | 2560x1600

Our performance and value data show that, in this case, AMD's approach of putting two GPUs on a single high-end card is effective. The 4850 X2 2GB can be had from Newegg for less than $300, which is more than $50 cheaper than a single-GPU NVIDIA solution that delivers the same performance where it counts.

Interestingly, AMD's two-card solutions tended to scale better than the single-card multi-GPU options here. In games where memory is the limiter, our single-card options with more memory scale better; in this case, something other than memory appears to be the bigger bottleneck. We can't say for certain, but our guess is the PCIe bus: with each card in its own full x16 slot, each GPU can communicate more efficiently with the host than the two GPUs on a dual-GPU card, which share a single slot, and that seems to benefit Crysis performance.
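To put rough numbers on the PCIe guess: single-card multi-GPU boards place both GPUs behind an onboard PCIe bridge that connects to one x16 slot. The sketch below is worst-case arithmetic under stated assumptions, not a measurement: it assumes PCIe 2.0 (5 GT/s per lane, 8b/10b encoding), assumes both GPUs transfer to the host at the same moment, and ignores packet overhead and GPU-to-GPU traffic over the bridge. `host_bandwidth_per_gpu` is a hypothetical helper name.

```python
# PCIe 2.0 signals at 5 GT/s (5000 MT/s) per lane. With 8b/10b encoding,
# 8 of every 10 bits are data: 4000 Mbit/s, or 500 MB/s per direction.
MT_PER_S_PER_LANE = 5000
MB_PER_S_PER_LANE = MT_PER_S_PER_LANE * 8 / 10 / 8  # 500.0


def host_bandwidth_per_gpu(lanes: int, gpus_sharing_link: int) -> float:
    """Best-case MB/s (one direction) per GPU when every GPU behind a single
    link transfers to the host at the same time."""
    if lanes <= 0 or gpus_sharing_link <= 0:
        raise ValueError("lanes and GPU count must be positive")
    return lanes * MB_PER_S_PER_LANE / gpus_sharing_link


if __name__ == "__main__":
    print("two cards, own x16 slots:", host_bandwidth_per_gpu(16, 1), "MB/s per GPU")
    print("dual-GPU card, one x16 slot:", host_bandwidth_per_gpu(16, 2), "MB/s per GPU")
```

In this simplified model each GPU in a two-card setup has up to 8000 MB/s to the host, while the two GPUs on one card drop to 4000 MB/s each whenever they transfer simultaneously. Real traffic is burstier than this, so treat it as an upper bound on the difference.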

At 1920x1200, the only single-GPU solutions that remain playable are the GTX 280 and GTX 285. Getting good performance on a 30" monitor (2560x1600) requires either a GTX 295 or two GTX 280s/285s; nothing else passes the test at the highest resolution we tested.

95 Comments

SiliconDoc - Wednesday, March 18, 2009

Oh, I'm sorry, go to that new technology red series, the 3000 series, and get that 3870... that covers that GAP the reds have that they constantly WHINE nvidia has but DOES NOT. Yes, go back a full gpu gen for the reds card midrange...

GOOD GOLLY - how big have the reddies been lying?!?

HUGE!

MagicPants - Monday, February 23, 2009

I know you're trying to isolate and rate only the video cards, but the fact of the matter is if you spend $200 on a video card that bottlenecks a $3000 system, you have made a poor choice. By your metrics, integrated video would "win" a number of tests because it is more or less free. You should add a chart where you include the cost of the system. Also, feel free to use something besides an i7 965. Spending $1000 on a CPU in an article about CPU thrift seems wrong.

oldscotch - Monday, February 23, 2009

I'm not sure that the article was trying to demonstrate how these cards compare in a $3000.00 system, as much as it was trying to eliminate any possibility of a CPU bottleneck.

MagicPants - Monday, February 23, 2009

Sure, if the article were about pure performance this would make sense, but in a performance-per-dollar comparison it's out of place. If you build a system for $3000, stick a $200 GTX 260 in it and get 30 fps, you've just paid about $106.67 per fps ($3200 / 30 fps).

Take that same $3000 system, stick a $500 GTX 295 in it, and assume you get 50 fps in the same game. Now you've paid just $70 per fps ($3500 / 50 fps).

In the context of that $3000 system the gtx 295 is the better buy because the system is "bottlenecking" the price.
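MagicPants's arithmetic can be written as a small function that computes cost per frame either for the card alone or with the rest of the system included. This is a minimal sketch; `dollars_per_fps` is a hypothetical helper name, and the prices and frame rates are his hypothetical figures, not benchmark results.

```python
def dollars_per_fps(card_price: float, fps: float, platform_price: float = 0.0) -> float:
    """Dollars paid per frame per second.

    With platform_price=0 this is the card-only view; passing the price of the
    rest of the system gives the whole-system view.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    return (card_price + platform_price) / fps


if __name__ == "__main__":
    system = 3000.0  # hypothetical cost of everything except the graphics card
    cards = [("GTX 260", 200.0, 30.0), ("GTX 295", 500.0, 50.0)]  # hypothetical price, fps
    for name, price, fps in cards:
        card_only = dollars_per_fps(price, fps)
        whole_system = dollars_per_fps(price, fps, platform_price=system)
        print(f"{name}: ${card_only:.2f}/fps card only, ${whole_system:.2f}/fps with system")
```

The card-only view favors the GTX 260 ($6.67 vs. $10.00 per fps), while the whole-system view favors the GTX 295 ($106.67 vs. $70.00 per fps). The same function also shows the integrated-graphics objection: as `card_price` approaches zero with `platform_price` left at zero, the card-only cost per frame approaches zero regardless of how low the frame rate is.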

OSJF - Monday, February 23, 2009

What about micro stuttering in multi-GPU configurations? I just bought an HD 4870 1GB today; the only reason I didn't choose a multi-GPU configuration was all the talk about micro stuttering on German tech websites.
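Micro stuttering is usually described as uneven frame delivery in two-GPU setups that use alternate frame rendering (each GPU draws every other frame): the average frame rate looks fine, but frames arrive in uneven pairs. The minimal sketch below uses made-up frame times, not measurements from this article, to show how an average can hide it. `frame_pacing_summary` is a hypothetical helper, and its "mean swing" value (average change between consecutive frame times) is just one simple way to quantify pacing, not a standard benchmark metric.

```python
from statistics import mean


def frame_pacing_summary(frame_times_ms: list[float]) -> dict[str, float]:
    """Summarize a sequence of per-frame render times in milliseconds."""
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frames")
    avg_ms = mean(frame_times_ms)
    # Average absolute change between consecutive frames; large values mean
    # frames are arriving unevenly even if the average looks fine.
    swing_ms = mean(abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:]))
    return {
        "average_fps": 1000.0 / avg_ms,
        "worst_frame_fps": 1000.0 / max(frame_times_ms),
        "mean_swing_ms": swing_ms,
    }


if __name__ == "__main__":
    # Made-up frame times: both runs average 25 ms per frame (40 fps).
    even = [25.0] * 8
    uneven = [10.0, 40.0] * 4
    for label, times in (("even pacing", even), ("uneven pacing", uneven)):
        print(label, frame_pacing_summary(times))
```

Both runs report 40 fps on average, but the uneven run delivers every other frame 40 ms after the previous one, so it can feel closer to 25 fps. That gap between the average and the slowest frames is why micro stuttering tends to matter most when average frame rates are already low.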

DerekWilson - Monday, February 23, 2009

In general, we don't see micro stuttering except at high resolutions in memory-intensive games whose average framerates are already lowish. Games, hardware and drivers have gotten better on that front with two GPUs, to the point where we don't notice it as a problem in our hands-on testing.

chizow - Monday, February 23, 2009

Nice job on the review, Derek, certainly a big step up from the reviews of the last 4-5 months. A few comments, though:

1) It would be nice to take a step back and look at CPU scaling with 1, 2 and 3 GPUs. Obviously you'd have to cut down the number of GPUs tested, but perhaps one from each generation would be a good starting point for this analysis.

2) Some games where there's clearly artificial frame caps or limits, why wouldn't you remove them in your testing first? For example, Fallout 3 allows you to remove the frame cap/smoothing limit, which would certainly be more useful info than a bunch of SLI configs hitting 60FPS cap.

3) COD5 is interesting though, did you contact Treyarch about the apparent 60FPS limit for single-GPU solutions? I don't recall any such cap with COD4.

4) Is the 4850X2 still dependent on custom drivers from Sapphire? I've read some horror stories about official releases not being compatible with the 4850X2, which would certainly put owners behind the 8-ball as a custom driver would certainly have the highest risk of being dropped when it comes to support.

5) It would've been nice to see an overclocked i7 used, since CPU bottlenecks are clearly going to come into play even more once you go to 3 and 4 GPU solutions, reducing the gains and scaling of the faster individual solutions.

Lastly, do you plan on discussing or investigating the impact of multi-threaded optimizations from drivers in Vista/Win7? You mentioned it in your DX11 article, but both Nvidia and ATI have already made improvements in their drivers, which seem to be directly credited for some of the recent driver gains. In particular, I'd like to see if it's a WDDM 1.0-1.1 benefit from a multi-threaded driver that extends to the DX9, 10 and 11 paths, or if it's limited strictly to WDDM 1.0-1.1 and DX10+ paths.

Thanks, look forward to the rest.

SiliconDoc - Wednesday, March 18, 2009

Thank you very much.

"2) Some games where there's clearly artificial frame caps or limits, why wouldn't you remove them in your testing first? For example, Fallout 3 allows you to remove the frame cap/smoothing limit, which would certainly be more useful info than a bunch of SLI configs hitting 60FPS cap.

3) COD5 is interesting though, did you contact Treyarch about the apparent 60FPS limit for single-GPU solutions? I don't recall any such cap with COD4.

4) Is the 4850X2 still dependent on custom drivers from Sapphire? I've read some horror stories about official releases not being compatible with the 4850X2, which would certainly put owners behind the 8-ball as a custom driver would certainly have the highest risk of being dropped when it comes to support.

"

#2 - a red rooster booster that limits nvidia winning by a large margin, unfairly.

#3 - Ditto

#4 - Ditto de opposite = this one boosts the red card unfairly

Yes, when I said "red fan boy" is all over Derek's articles, I meant it.

DerekWilson - Monday, February 23, 2009

Thanks for the feedback ... we'll consider some of this going forward. We did what we could to remove artificial frame caps. In Fallout 3 we set iPresentInterval to 0 in both .ini files, and the framerate does go above 60; it just doesn't average above 60, so it looks like a vsync issue when it's actually an LOD issue.
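The Fallout 3 change described above is a one-line edit in each .ini file. The minimal Python sketch below makes that edit; it assumes the key appears as `iPresentInterval=<number>` at the start of its own line, and it assumes (not stated in the comment) that the two files are the usual `Fallout.ini` and `FalloutPrefs.ini` under `My Games\Fallout3`. `disable_present_interval` and `patch_ini_file` are hypothetical helper names.

```python
import re
from pathlib import Path

# Matches "iPresentInterval=<digits>" at the start of a line. INI keys are
# case-insensitive, so any capitalization matches; group 1 keeps everything up
# to the value so indentation and spacing around "=" are preserved.
_PRESENT_INTERVAL = re.compile(
    r"^([ \t]*ipresentinterval[ \t]*=[ \t]*)\d+",
    re.IGNORECASE | re.MULTILINE,
)


def disable_present_interval(ini_text: str) -> str:
    """Return ini_text with every iPresentInterval value set to 0 (vsync off)."""
    patched, count = _PRESENT_INTERVAL.subn(r"\g<1>0", ini_text)
    if count == 0:
        raise ValueError("no iPresentInterval entry found")
    return patched


def patch_ini_file(path: Path) -> None:
    """Rewrite one .ini file in place (hypothetical helper; back the file up first)."""
    text = path.read_text(encoding="utf-8")
    path.write_text(disable_present_interval(text), encoding="utf-8")


if __name__ == "__main__":
    sample = "[Display]\niPresentInterval=1\n"  # illustrative snippet, not a full ini
    print(disable_present_interval(sample))
```

Editing the text with a targeted regular expression, rather than parsing and re-serializing the whole file, leaves every other line, comment and key ordering untouched.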

We didn't contact anyone about COD5, though there's a console variable that's supposed to help but didn't (except for the multi-GPU solutions).

We're looking at doing overclocking tests as a follow-up. We're not 100% sure on that, but we do see the value.

As for the Sapphire 4850 X2, part of the reason we didn't review it initially was that we couldn't get drivers. Ever since Catalyst 8.12 we've had full driver support for the X2 from AMD. We didn't use any specialized drivers for that card at all.

We can look at the impact of multithreaded optimizations, but this will likely not come until DX11, as most of the stuff we talked about requires DX11 to work. I'll talk to NVIDIA and AMD about current multithreaded optimizations, and if they say there is anything useful to see in current drivers, we'll check it out.

Thanks again for the feedback.

chizow - Monday, February 23, 2009

Oh and specifically, much better layout/graph display with the resolution selections! :)