NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST. Posted in GPUs.

Power and Power Management

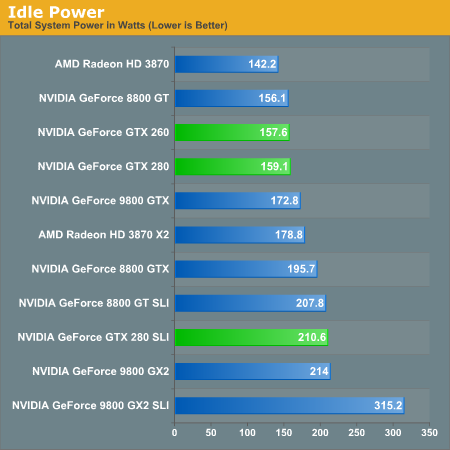

Power is a major concern for many tech companies going forward, and just adding features "because we can" isn't the modus operandi anymore. Now it's cool (pardon the pun) to focus on power management, performance per watt, and similar metrics. To that end, NVIDIA has beaten its GT200 into such submission that its 2D power consumption can reach as low as 25W. As we will show below, this can have a very positive impact on idle power for a very powerful bit of hardware.

These enhancements aren't breakthrough technologies: NVIDIA is just using clock gating and dynamic voltage and clock speed adjustment to achieve these savings. There is hardware on the GPU that monitors utilization and automatically sets the clock speeds for one of four performance modes (off for hybrid power, 2D/idle, HD video, or 3D/performance). Mode changes can happen on the order of milliseconds. This is very similar to what AMD has already implemented.
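To make the mode switching concrete, here is a minimal sketch of a utilization-driven mode selector. It is not NVIDIA's actual policy, which isn't public: the `THRESHOLD_3D` cutoff, the `select_mode` function, and its parameters are all hypothetical names and values chosen for illustration. Only the four mode names come from the description above.

```python
from enum import Enum


class PerfMode(Enum):
    """The four performance modes named in the article."""
    HYBRID_OFF = "off (hybrid power)"
    IDLE_2D = "2D/idle"
    HD_VIDEO = "HD video"
    PERF_3D = "3D/performance"


# Hypothetical cutoff: the real switching policy is not published.
THRESHOLD_3D = 0.30  # assumed fraction of 3D-engine utilization


def select_mode(utilization_3d, video_decode_active=False, hybrid_off=False):
    """Pick a performance mode from one utilization sample (0.0 to 1.0)."""
    if not 0.0 <= utilization_3d <= 1.0:
        raise ValueError("utilization_3d must be between 0.0 and 1.0")
    if hybrid_off:
        # Hybrid power: the discrete GPU is switched off entirely.
        return PerfMode.HYBRID_OFF
    if utilization_3d >= THRESHOLD_3D:
        # Heavy 3D work wins over everything else.
        return PerfMode.PERF_3D
    if video_decode_active:
        # Light 3D load but the video engine is busy.
        return PerfMode.HD_VIDEO
    return PerfMode.IDLE_2D


if __name__ == "__main__":
    # (3D utilization, video decode active) samples, all made up.
    samples = [(0.02, False), (0.10, True), (0.85, False)]
    for util, video in samples:
        print(util, video, select_mode(util, video).value)
```

A real implementation would presumably add hysteresis so the GPU doesn't bounce between modes on every sample, but a single threshold is enough to show the idea.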

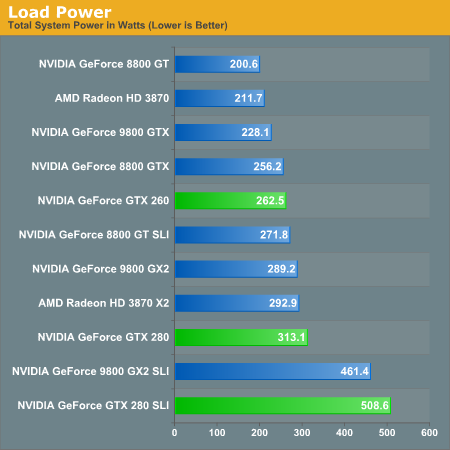

With increasing transistor counts and huge GPUs with lots of memory, power isn't something that can stay low all the time. Eventually the hardware will actually have to do something, and then voltages will rise, clock speeds will increase, and power will be converted into dissipated heat and frames per second. It is hard to say which is more impressive: the power saving features at idle or the power draw at load.

There is an in-between stage for HD video playback that runs at about 32W, and it is good to see some attention paid to this issue specifically. This bodes well for mobile chips based on the GT200 design, but on the desktop this isn't as mission critical. Yes, reducing power (and thus what I have to pay my power company) is a good thing, but plugging a card like this into your computer is like driving an exotic car: if you want the experience you've got to pay for the gas.
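For a rough sense of what those low-power modes mean on the power bill, the arithmetic can be sketched as below. The 25W and 32W figures are the GPU numbers quoted in this article; the electricity price and the usage hours are assumptions picked purely for illustration, and `energy_kwh` and `annual_cost` are hypothetical helper names.

```python
IDLE_2D_WATTS = 25.0    # 2D/idle figure quoted in the article
HD_VIDEO_WATTS = 32.0   # HD video playback figure quoted in the article

# Assumption: a flat electricity price in dollars per kWh. Substitute your own rate.
ASSUMED_PRICE_PER_KWH = 0.10


def energy_kwh(watts, hours):
    """Energy in kilowatt-hours for a constant draw over a number of hours."""
    return watts * hours / 1000.0


def annual_cost(watts, hours_per_day, price_per_kwh=ASSUMED_PRICE_PER_KWH):
    """Yearly cost of a constant draw for the given hours every day."""
    return energy_kwh(watts, hours_per_day * 365) * price_per_kwh


if __name__ == "__main__":
    # Assumed usage pattern: GPU idles around the clock, or plays video 2 hours a day.
    print("idle, 24 h/day:", round(annual_cost(IDLE_2D_WATTS, 24), 2))
    print("HD video, 2 h/day:", round(annual_cost(HD_VIDEO_WATTS, 2), 2))
```

These are GPU-only numbers; the rest of the system and power supply losses would add to whatever the wall socket sees.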

Idle power this low is definitely nice to see. Having high-end cards idle near midrange solutions from previous generations is a step in the right direction.

But as soon as we open up the throttle, that power miser is out the door and joules start flooding in by the bucket.

Cooling NVIDIA's hottest card isn't easy and you can definitely hear the beast moving air. At idle, the GPU is as quiet as any other high-end NVIDIA GPU. Under load, as the GTX 280 heats up the fan spins faster and moves much more air, which quickly becomes audible. It's not GeForce FX annoying, but it's not as quiet as other high-end NVIDIA GPUs; then again, there are 1.4 billion transistors switching in there. If you have a silent PC, the GTX 280 will definitely un-silence it and put out enough heat to make the rest of your fans work harder. If you're used to a GeForce 8800 GTX, GTS or GT, the noise will bother you. The problem is that returning to idle from gaming for a couple of hours results in a fan that doesn't want to spin down as low as when you first turned your machine on.

While it's impressive that NVIDIA built this chip on a 65nm process, it desperately needs to move to 55nm.

108 Comments


Anand Lal Shimpi - Monday, June 16, 2008 - link

Thanks for the heads up, you're right about G92 only having 4 ROPs, I've corrected the image and references in the article. I also clarified the GeForce FX statement, it definitely fell behind for more reasons than just memory bandwidth, but the point was that NVIDIA has been trying to go down this path for a while now.

Take care,

Anand

mczak - Monday, June 16, 2008 - link

Thanks for correcting. Still, the paragraph about the FX is a bit odd imho. Lack of bandwidth really was the least of its problems, it was a too complicated core with actually lots of texturing power, and sacrificed raw compute power for more programmability in the compute core (which was its biggest problem).

Arbie - Monday, June 16, 2008 - link

I appreciate the in-depth look at the architecture, but what really matters to me are graphics performance, heat, and noise. You addressed the card's idle power dissipation but only in full-system terms, which masks a lot. Will it really draw 25W in idle under WinXP?

And this highly detailed review does not even mention noise! That's very disappointing. I'm ready to buy this card, but Tom's finds their samples terribly noisy. I was hoping and expecting Anandtech to talk about this.

Arbie

Anand Lal Shimpi - Monday, June 16, 2008 - link

I've updated the article with some thoughts on noise. It's definitely loud under load, not GeForce FX loud but the fan does move a lot of air. It's the loudest thing in my office by far once you get the GPU temps high enough.

From the updated article:

"Cooling NVIDIA's hottest card isn't easy and you can definitely hear the beast moving air. At idle, the GPU is as quiet as any other high-end NVIDIA GPU. Under load, as the GTX 280 heats up the fan spins faster and moves much more air, which quickly becomes audible. It's not GeForce FX annoying, but it's not as quiet as other high-end NVIDIA GPUs; then again, there are 1.4 billion transistors switching in there. If you have a silent PC, the GTX 280 will definitely un-silence it and put out enough heat to make the rest of your fans work harder. If you're used to a GeForce 8800 GTX, GTS or GT, the noise will bother you. The problem is that returning to idle from gaming for a couple of hours results in a fan that doesn't want to spin down as low as when you first turned your machine on.

While it's impressive that NVIDIA built this chip on a 65nm process, it desperately needs to move to 55nm."

Mr Roboto - Monday, June 16, 2008 - link

I agree with what Darkryft said about wanting a card that absolutely without a doubt, stomps the 8800GTX. So far that hasn't happened as the GX2 and GT200 hardly do either. The only thing they proved with the G90 and G92 is that they know how to cut costs.

Well thanks for making me feel like such a smart consumer as it's going on 2 years with my 8800GTX and it still owns 90% of the games I play.

P.S. It looks like Nvidia has quietly discontinued the 8800GTX as it's no longer on major retail sites.

Rev1 - Monday, June 16, 2008 - link

Ya the 640 8800 gts also. No Sli for me lol.

wiper - Monday, June 16, 2008 - link

What about noise? Other reviews show mixed data. One says it's another dustblower, others say the noise level is ok.

Zak - Monday, June 16, 2008 - link

First thing though, don't rely entirely on spell checker:)) Page 4 "Derek Gets Technical": "borrowing terminology from weaving was cleaver" I believe you meant "clever"?

As darkryft pointed out:

"In my opinion, for $650, I want to see some f-ing God-like performance."

Why would anyone pay $650 for this? Ugh? This is probably THE disappointment of the year:(((

Z.

js01 - Monday, June 16, 2008 - link

On techpowerups review it seemed to pull much bigger numbers but they were using xp sp2.

http://www.techpowerup.com/reviews/Point_Of_View/G...

NickelPlate - Monday, June 16, 2008 - link

Pfft, title says it all. Let's hope that driver updates widen the gap between previous high end products. Otherwise, I'll pass on this one.