Noise and Heat

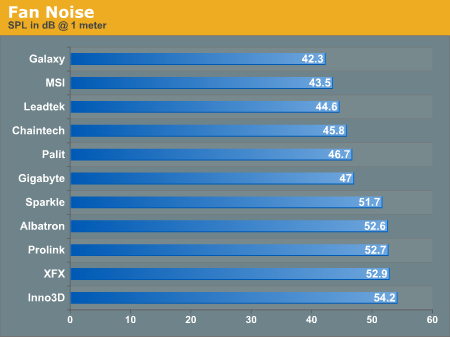

Here is the meat and potatoes of our comparison of the 11 GeForce 6600 GT cards today. How quiet and efficient are the cooling solutions attached to the cards? We are about to find out.

Our noise test was done using an SPL meter in a very quiet room. Unfortunately, we haven't yet been able to baffle the walls with sound-deadening material in the lab, and the CPU and PSU fans were still on as well. But in each case, the GPU fan was the loudest contributor to the SPL in the room by a large margin. Thus, the SPL of the GPU fan is the factor that drove the measured SPL of the system.
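To illustrate why the loudest fan dominates the reading, here is a minimal sketch of how independent sound sources add on the decibel scale. It uses the standard power-sum formula for incoherent sources. The function name `combine_spl` and every level in it are hypothetical values chosen for illustration, not measurements from our lab.

```python
import math


def combine_spl(levels_db):
    """Combine independent (incoherent) sound sources given as SPL in dB.

    Each level is converted to relative power, the powers are summed,
    and the sum is converted back to dB.
    """
    return 10 * math.log10(sum(10 ** (level / 10) for level in levels_db))


# Hypothetical levels for illustration only (not data from this review).
background = [30.0, 32.0]  # e.g. a CPU fan and a PSU fan
gpu_fan = 48.0

total = combine_spl(background + [gpu_fan])
print(f"GPU fan alone: {gpu_fan:.2f} dB, whole system: {total:.2f} dB")
```

With a gap this large between the GPU fan and everything else, the combined figure sits only a fraction of a decibel above the GPU fan alone, which is why the system measurement tracks the GPU fan so closely.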

Our measurement was taken at 1 meter from the caseless computer. Please keep in mind when looking at this graph that everyone experiences sound and decibel levels a little bit differently. Generally, though, a one dB change in SPL translates to a perceivable change in volume. Somewhere between a 6 dB and 10 dB difference, people perceive the volume of a sound to double. That means the Inno3D fan is over twice as loud as the Galaxy fan. Two newcomers to our labs end up rounding out the top and bottom of our chart.
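The rule of thumb above can be sketched in a few lines. Because the doubling point is somewhere between 6 dB and 10 dB, the sketch evaluates both ends of that range. The function name `loudness_ratio` and the 8 dB gap are assumptions for illustration; the real gaps are the ones shown in the graph.

```python
def loudness_ratio(delta_db, db_per_doubling):
    """Approximate perceived loudness ratio for a given SPL difference.

    Assumes perceived volume doubles every `db_per_doubling` decibels,
    which is a rule of thumb rather than a precise psychoacoustic model.
    """
    return 2 ** (delta_db / db_per_doubling)


# Hypothetical gap between two cards, for illustration only.
delta = 8.0

conservative = loudness_ratio(delta, 10.0)  # doubling every 10 dB
aggressive = loudness_ratio(delta, 6.0)     # doubling every 6 dB
print(f"{delta} dB louder is roughly {conservative:.2f}x to {aggressive:.2f}x as loud")
```

Which end of the range applies depends on the listener, so a result is best read as a band rather than a single number.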

The very first thing to notice about our chart is that the top three spots are held by our custom round HSF solutions with no shroud. This is clearly the way to go for quiet cooling.

Chaintech, Palit, and Gigabyte are the quietest of the shrouded solutions, and going by our rule of thumb, the Palit and Gigabyte cards may be barely audibly louder than the Chaintech card.

The Albatron, Prolink, and XFX cards have no audible difference, and they are all very loud cards. The fact that the Sparkle card is a little over 1 dB down from the XFX card is a little surprising: they use the same cooling solution with a different sticker attached. Of course, you'll remember from the XFX page that XFX seems to have attached a copper plate and a pad to the bottom of the HSF. The fact that Sparkle's solution is more stable (while XFX has tighter pressure on the GPU from the springs) could account for the slight difference in sound here.

All of our 6600 GT solutions are fairly quiet. These fans are nothing like the ones on larger models, and the volume levels are nothing to be concerned about. Of course, the Inno3D fan did have a sort of whine to it that we could have done without. It wasn't shrill, but it was clearly a relatively higher pitch than the low drone of the other fans that we had listened to.

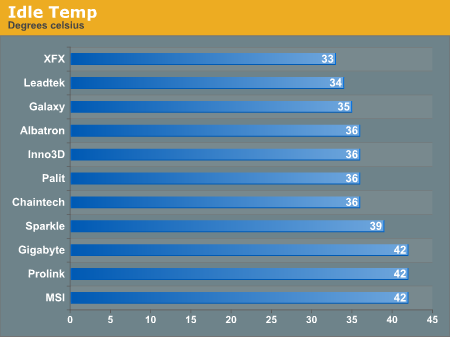

NVIDIA clocks its 6600 GT cores at 300 MHz when not in 3D mode, and since large sections of the chip are not in use, not much power is needed, and less heat is dissipated than if a game were running. But there is still work going on inside the silicon, and the fan is still spinning its heart out.

Running the coolest is the XFX card. That extra copper plate and tension must be doing something for it, and glancing down at the Sparkle card, perhaps we can see the full picture of why XFX decided to forgo the rubber nubs on their HSF.

The Leadtek and Galaxy cards come in second, pulling in well in two categories.

We have the feeling that the MSI and Prolink cards had their thermal tape or thermal glue seals broken at some point at the factory or during shipping. We know that the seal on the thermal glue on the Gigabyte card was broken, as this card introduced us to the problems with handling 6600 GT solutions that don't have good 4 corner support under the heatsink. We tried our best to reset it, but we don't think that these three numbers are representative of what the three companies can offer in terms of cooling. We will see similar numbers in the load temp graphs as well.

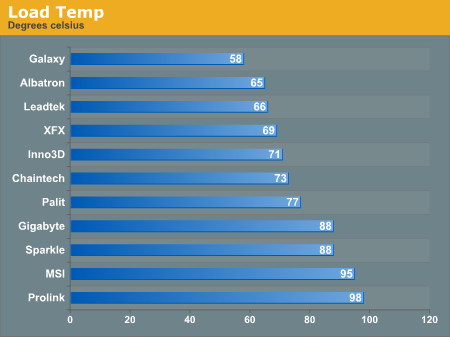

Our heat test consists of running a benchmark over and over and over again on the GPU until we hit a maximum temperature. There are quite a few factors that go into the way a GPU is going to heat up in response to software, and our goal in this test was to push maximum thermal load. Since we are looking at the same architecture, only the particular variance in GPU and the vendor's implementation of the product are factors in the temperature reading we get from the thermal diode. These readings should be directly comparable.

We evaluated Doom 3, Half-Life 2, 3DMark05, and UT2K4 as thermal test platforms. We selected resolutions that were not CPU bound, but we had to try very hard not to push memory bandwidth beyond saturation. Looping demos in different levels and different resolutions with different settings while observing temperatures gave us a very good indication of the sweet spot for putting pressure on the GPU in these games, and the winner for the hardest hitting game in the thermal arena is: Half-Life 2.

The settings we used for our 6600 GT test were 1280x1024 with no AA and AF. The quality settings were cranked up. We looped our at_coast_12-rev7 demo until a stable maximum temperature was found.
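The "loop until a stable maximum" idea can be sketched as a simple stopping rule: keep reading temperatures and stop once the peak has not been beaten for a set number of consecutive readings. This is only an illustration of the idea, not the procedure we followed by eye. The function name `stable_maximum`, the `patience` parameter, and the sample readings are all hypothetical.

```python
def stable_maximum(readings, patience=5):
    """Return the peak temperature once it has stopped rising.

    The peak counts as stable when `patience` consecutive readings
    fail to exceed it. Returns None if the readings run out first.
    """
    peak = None
    since_peak = 0
    for temp in readings:
        if peak is None or temp > peak:
            peak = temp
            since_peak = 0
        else:
            since_peak += 1
            if since_peak >= patience:
                return peak
    return None


# Hypothetical readings in degrees C, for illustration only.
samples = [50, 60, 65, 68, 68, 67, 68, 68]
print(stable_maximum(samples, patience=3))
```

A larger `patience` value trades a longer test run for more confidence that the card has really stopped heating up.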

We had trouble in the past observing the thermal diode temperature, but this time around, we set up multiple monitors. Our second monitor was running at 640x480x8@60 in order to minimize the framebuffer impact. We kept the driver open to the temperature panel on the second monitor while the game ran and observed the temperature fluctuations. We still really want an application from NVIDIA that can record these temperatures over time, as the part heats and cools very rapidly. This would also eliminate any impact from running a second display. Performance impact was minimal, so we don't believe temperature impact was large either. Of course, that's no excuse for not trying to do things in the optimal way. All we want is an MBM5 plugin; is that too much to ask?
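As a rough picture of the kind of logging tool we have in mind, here is a minimal sketch that samples a temperature source at a fixed interval and keeps a timestamped record. The `read_temp` callable is a hypothetical stand-in for whatever would actually expose the thermal diode; no such interface is assumed to exist, and the stand-in values below are made up.

```python
import time


def record_temperatures(read_temp, samples, interval_s=1.0,
                        sleep=time.sleep, clock=time.monotonic):
    """Collect `samples` readings as (seconds_since_start, temperature).

    `read_temp` is any zero-argument callable returning a temperature;
    it is a placeholder, not a real driver call.
    """
    start = clock()
    log = []
    for i in range(samples):
        log.append((clock() - start, read_temp()))
        if i < samples - 1:
            sleep(interval_s)
    return log


# Stand-in source with made-up values, for illustration only.
fake_readings = iter([48, 55, 61, 66])
log = record_temperatures(lambda: next(fake_readings), samples=4, interval_s=0.0)
peak = max(temp for _, temp in log)
print(f"{len(log)} samples recorded, peak {peak} C")
```

Because the part heats and cools so quickly, a short sampling interval matters more here than the total length of the log.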

It is somewhat surprising that Galaxy is the leader in handling load temps. Of course, the fact that it was the lightest overclock of the bunch probably helped it a little bit, but most other cards were running up at about 68 degrees under load before we overclocked them. The XFX card, slipping down a few slots from the Idle clock numbers with its relatively low core overclock, combined with the fact that our core clock speed leader moves up to be the second coolest card in the bunch, makes this a very exciting graph.

For such a loud fan, we would have liked to see Inno3D cool the chip a little better under load, but their placement in the pack is still very respectable. The Sparkle card again shows that XFX had some reason behind their design change. The added copper bar really helped them even though they lost some stability.

The Gigabyte (despite my best efforts to repair my damage), MSI, and Prolink cards were all way too hot, even at stock speeds. I actually had to add a clamp and a rubber band to the MSI card to keep it from reaching over 110 degrees C at stock clocks. The problem was that the thermal tape on the RAM had come loose from the heatsink. Rather than having the heatsink stuck down to both banks of RAM as well as the two spring pegs, the heatsink was lifting off of the top of the GPU. We didn't notice this until we started testing because the HSF had pulled away less than a millimeter. The MSI design is great, and we wish that we could have had an undamaged board. MSI could keep this from happening if they put a spacer between the board and the HSF on the opposite side from the RAM near the PCIe connectors.

84 Comments

Bonesdad - Wednesday, February 16, 2005 - link

Yes, I too would like to see an update here...have any of the makers attacked the HSF mounting problems?

1q3er5 - Tuesday, February 15, 2005 - link

can we please get an update on this article with more cards, and replacements of defective cards? I'm interested in the gigabyte card

Yush - Tuesday, February 8, 2005 - link

Those temperature results are pretty dodge. Surely no regular computer user would have a caseless computer. Those results are only favourable and only shed light on how cool the card CAN be, and not how hot they actually are in a regular scenario. The results would've been much more useful had the temperature been measured inside a case.

Andrewliu6294 - Saturday, January 29, 2005 - link

i like the albatron best. Exactly how loud is it? like how many decibels?

JClimbs - Thursday, January 27, 2005 - link

Anyone have any information on the Galaxy part? I don't find it in a pricewatch or pricegrabber search at all.

Abecedaria - Saturday, January 22, 2005 - link

Hey there. I noticed that Gigabyte seems to have modified their HSI cooling solution. Has anyone had any experience with this? It looks much better. Comments?

http://www.giga-byte.com/VGA/Products/Products_GV-...

abc

levicki - Sunday, January 9, 2005 - link

Derek, do you read your email at all? I got Prolink 6600 GT card and I would like to hear a suggestion on improving the cooling solution. I can confirm that retail card reaches 95 C at full load and idles at 48 C. That is really bad image for nVidia. They should be informed about vendor's doing poor job on cooling design. I mean, you would expect it to be way better because those cards ain't cheap.

levicki - Sunday, January 9, 2005 - link

geogecko - Wednesday, January 5, 2005 - link

Derek. Could you speculate on what thermal compound is used to interface between the HSF and the GPU on the XFX card? I e-mailed them, and they won't tell me what it is?! It would be great if it was paste or tape. I need to be able to remove it, and then later, would like to re-install it. I might be able to overlook not having the component video pod on the XFX card, as long as I get an HDTV that supports DVI.

Beatnik - Friday, December 31, 2004 - link

I thought I would add about the DUAL-DVI issue, in the new NVIDIA drivers, they show that the second DVI can be used for HDTV output. It appears that even the overscan adjustments are there.

So not having the component "pod" on the XFX card appears to be less of a concern than I thought it might be. It would be nice to hear if someone tried running 1600x1200 + 1600x1200 on the XFX, just to know if the DVI is up to snuff for dual LCD use.